While Artificial Intelligence (AI) promises efficiency and innovation, it also introduces a new realm of challenges for corporate lawyers tasked with safeguarding data privacy and mitigating risk. Here are the top 5 concerns keeping them up at night:

1. The Data Deluge:

- AI thrives on data, often requiring access to vast troves of employee and customer information.

- Lawyers worry about:

- Compliance with data privacy regulations: Ensuring adherence to complex regulations like GDPR and CCPA is a constant juggle, especially as AI algorithms collect and analyze ever-increasing data volumes.

- Data breaches and leaks: The risk of sensitive data falling into the wrong hands through cyberattacks or accidental exposure is a major concern.

I asked Gary Kibel, a partner in the Privacy + Data Security practice group of Davis + Gilbert LLP, whether standard boilerplate software contracts protect corporate data. He said that, in his experience, many companies don’t read the fine print, and their data ends up being used by their software vendor. Gary’s advice to software buyers: “You must read the terms and conditions of any AI service used so that you understand how the service is allowed to use your information and what obligations they are assuming.”

2. Algorithmic Bias and Discrimination:

- Biases present in the data used to train AI systems can lead to discriminatory outcomes in areas like hiring, promotions, or even loan approvals.

- Lawyers fear legal repercussions due to:

- Unintentional discrimination: Unbiased data and rigorous testing are crucial to avoid violating anti-discrimination laws.

- Reputational damage: Public perception of bias, even if unintentional, can severely damage a company’s reputation.
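One common starting point for the "rigorous testing" mentioned above is the four-fifths rule of thumb from the EEOC's Uniform Guidelines: a group whose selection rate falls below 80% of the highest group's rate is treated as a signal of possible adverse impact. The Python sketch below illustrates that calculation only. The function names, group labels, and outcome counts are hypothetical, and a flagged result is a prompt for further review, not a legal conclusion.

```python
from collections import Counter


def selection_rates(records):
    """Return the share of favorable outcomes for each group.

    `records` is an iterable of (group, selected) pairs, where `selected`
    is True when the AI system produced a favorable outcome.
    """
    totals = Counter()
    selected = Counter()
    for group, was_selected in records:
        totals[group] += 1
        if was_selected:
            selected[group] += 1
    return {group: selected[group] / totals[group] for group in totals}


def adverse_impact_ratios(rates):
    """Divide each group's selection rate by the highest group's rate."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group received any favorable outcomes")
    return {group: rate / highest for group, rate in rates.items()}


def flag_groups(ratios, threshold=0.8):
    """List groups whose ratio falls below the four-fifths threshold."""
    return sorted(group for group, ratio in ratios.items() if ratio < threshold)


# Hypothetical outcomes from an AI resume-screening tool (illustrative only).
records = (
    [("group_a", True)] * 50 + [("group_a", False)] * 50
    + [("group_b", True)] * 30 + [("group_b", False)] * 70
)

rates = selection_rates(records)
ratios = adverse_impact_ratios(rates)
flagged = flag_groups(ratios)
print(rates, ratios, flagged)
```

In this made-up data, group_b is selected 30% of the time against 50% for group_a, so its ratio is 0.6 and it falls below the 0.8 threshold. A check like this is easy to rerun whenever the model or its training data changes, which gives counsel a documented record of testing.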

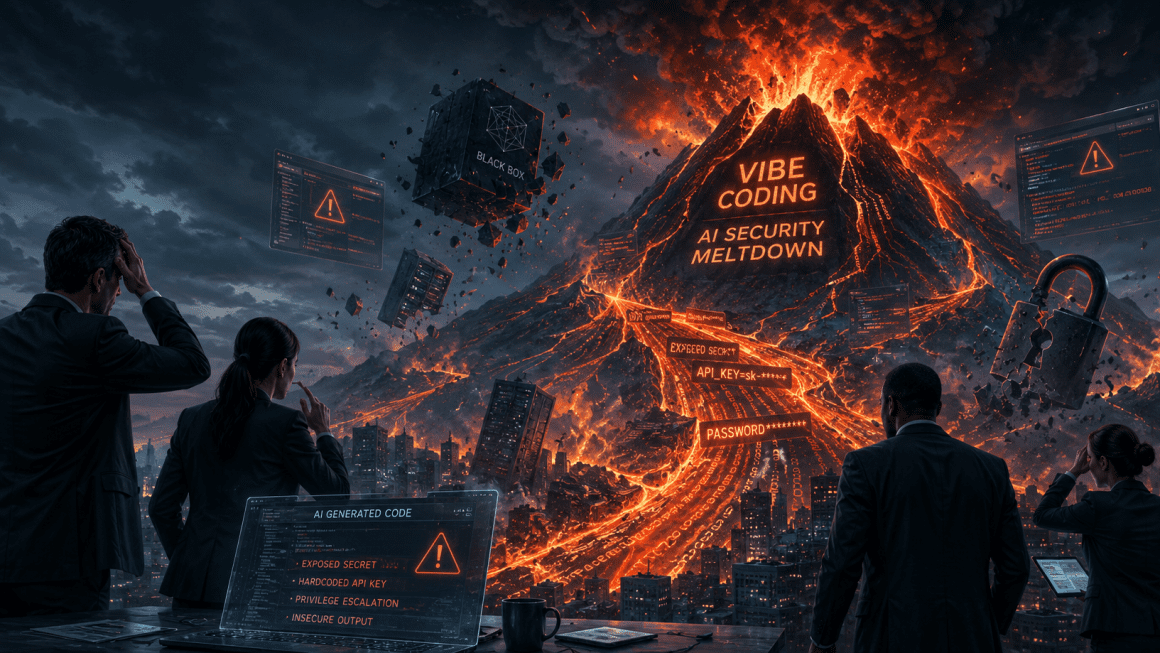

3. The Black Box Conundrum:

- Many AI systems operate as “black boxes,” making their decision-making processes opaque and difficult to understand.

- Lawyers grapple with:

- Lack of transparency: Without understanding how AI arrives at its conclusions, it’s difficult to assess its reliability and explain decisions to regulators or stakeholders.

- Accountability challenges: Holding someone accountable for AI-driven decisions becomes unclear, potentially exposing the company to legal liability.

It is widely accepted by now that AI-generated information should never be fully trusted and that a human must serve as the final check. With that joint effort in mind, I asked Gary when a client should be informed of AI usage. He said, “Be transparent with your clients. A deliverable generated with AI should never be provided without advising them how it was created.”

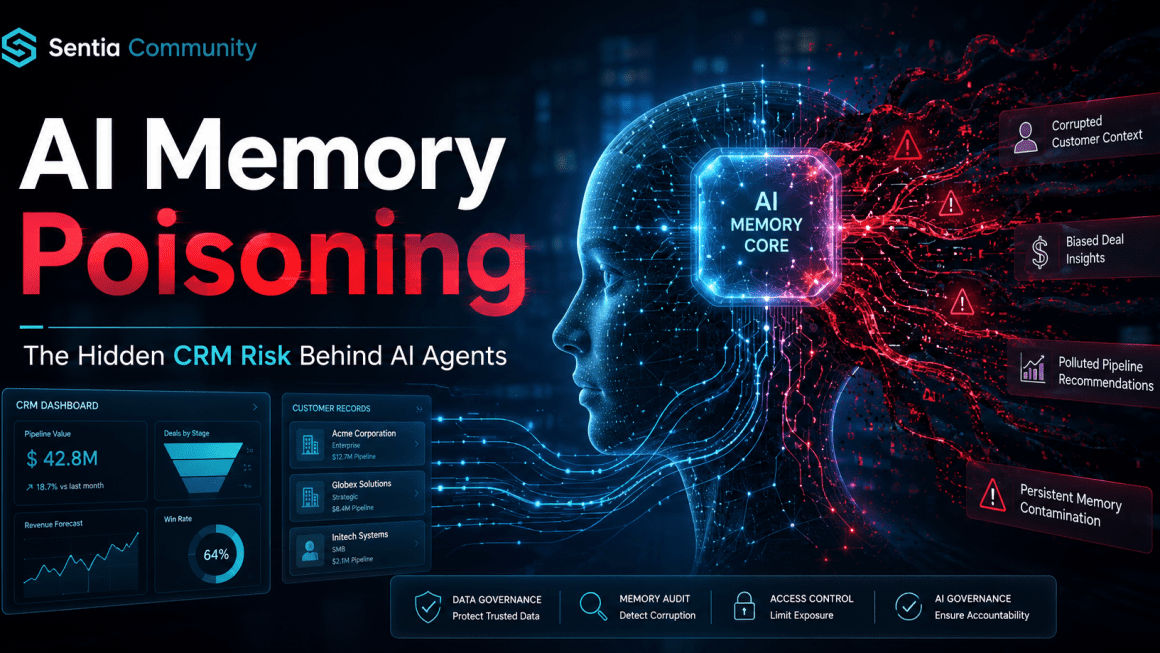

4. Ownership and Control of Data:

- When companies leverage external AI services, the question of data ownership arises.

- Lawyers are concerned about:

- Loss of control over sensitive data: Who owns the data used to train and operate the AI? Can it be used for unintended purposes without the company’s knowledge or consent?

- Compliance complexities: Ensuring adherence to data residency and transfer regulations becomes intricate when dealing with third-party AI vendors.

5. The Regulatory Wild West:

- The rapid evolution of AI has outpaced legal frameworks, leaving many legal questions unanswered.

- Lawyers are haunted by:

- Uncertainty about future regulations: Emerging regulations and potential legal interpretations surrounding AI pose challenges to proactive risk management.

- Lack of clear ethical guidelines: The absence of robust ethical frameworks can lead to unintended consequences and ethical dilemmas down the line.

Fighting Back: AI Risk Reduction Strategies for Lawyers

All of this is so new, with so little legal precedent to guide it, that I asked Gary what lawyers think about the legal future of AI. Gary said: “Lawyers should never rely upon AI as the sole source of information or legal advice. While the services can be helpful, they are not a replacement for sound legal research and analysis.”

While these concerns are valid, proactive strategies can help corporate lawyers mitigate risk:

- Conduct thorough due diligence: Scrutinize AI vendors’ data security practices, data ownership policies, and compliance certifications.

- Implement robust data governance frameworks: Establish clear guidelines for data collection, storage, usage, and disposal, ensuring compliance with relevant regulations.

- Demand transparency and explainability: Insist on AI vendors providing insights into their algorithms’ decision-making processes to enhance trust and accountability.

- Stay informed and engaged: Proactively keep abreast of evolving regulations and ethical discussions surrounding AI, and contribute to shaping responsible AI development.

By actively managing these concerns and employing effective risk reduction strategies, corporate lawyers can help their companies embrace the potential of AI while safeguarding data privacy, promoting ethical practices, and, ultimately, sleeping a little easier at night.

Gary Kibel is a partner in the Privacy + Data Security practice group of Davis + Gilbert LLP. Mr. Kibel regularly counsels clients with respect to new media/advertising law; privacy and data security; and information technology matters. Gary has an MBA from Binghamton University and a JD from Brooklyn Law School. For legal assistance, please contact Mr. Kibel via email at gkibel@dglaw.com.

David is an investor and executive director at Sentia AI, a next-generation AI sales enablement technology company and Salesforce partner. Dave’s passion for helping people with their AI, sales, marketing, business strategy, startup growth, and strategic planning has taken him across the globe and spans numerous industries. You can follow him on Twitter, LinkedIn, or the Sentia AI corporate page.

David Brown | CCO & Startup AI Investor
