Executive Summary:

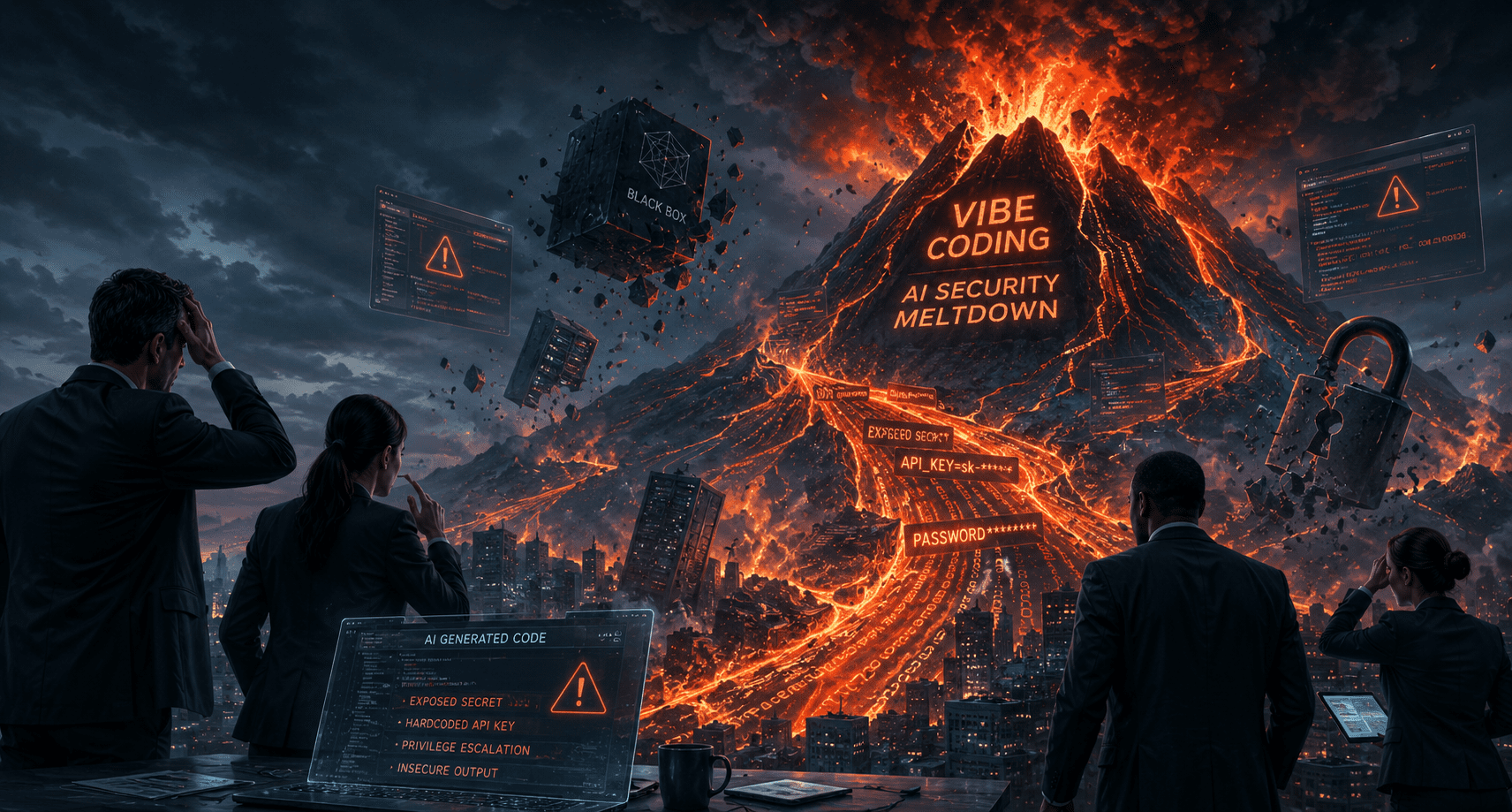

- Definition of Risk: Vibe Coding is the practice of using natural language prompts to generate software without formal engineering oversight, leading to “black box” systems that are unmanageable upon staff departure.1

- Security Vulnpocalypse: AI-generated code contains 2.74x more vulnerabilities than human-written code, with a 45% failure rate on secure coding benchmarks.3

- Critical Vulnerabilities: Research indicates a 322% increase in privilege escalation paths and a 153% jump in architectural design flaws within AI-assisted codebases.3

- Secrets Sprawl: AI coding tools are twice as likely to leak secrets; in 2025, over 28 million hardcoded credentials were detected in public repositories, a 34% year-on-year increase.5

- Shadow IT Proliferation: Approximately 75% of workers use personal AI tools for work, yet less than 11% of these applications are visible to IT departments.7

- Compliance & Governance: Unsanctioned AI usage triggers “Legally Toxic AI” risks under GDPR and HIPAA, potentially leading to mandatory model deletion and massive regulatory fines.8

Table of Contents

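- The Anatomy of Vibe Coding: Logic vs. Intuition

- The Knowledge Walk-out Risk: Why Black Boxes Kill Memory

- The Vulnpocalypse: Quantifying Security Risks

- Hardcoded Secrets and API Keys: The Open Door

- Shadow IT 2.0: Invisible Infrastructure & Data Leaks

- Compliance & IP: The Legal Minefield of AI Code

- Technical Debt and AI Slop: The Maintenance Burden

- The AIOps Mandate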

The Anatomy of Vibe Coding: Logic vs. Intuition

Vibe Coding represents a fundamental shift in software development where natural language prompts replace formal logic and architectural design.1 While this modality allows for rapid prototyping, it often results in the “Ralph Wiggum Loop,” where users repeatedly prompt an AI to fix errors without ever reviewing the underlying code.

In a large firm, this practice separates “Coding” (typing logic) from “Programming” (solving problems with structural understanding).2 When staff prioritize “vibes” over verification, they introduce neural-network-generated artifacts that look functional but lack the structural integrity required for production-grade systems.

The Knowledge Walk-out Risk: Why Black Boxes Kill Memory

The most immediate operational threat is the “Knowledge Walk-out.” When an employee builds a critical internal tool using Agentic AI and then leaves the firm, they take the only working knowledge of that tool with them.10

Because vibe-coded tools are rarely documented or committed to the firm’s approved Git infrastructure, they become invisible “black boxes”.10 Research from the Software Engineering Institute suggests that firms spend 60-80% of their maintenance budgets on systems with poor documentation; vibe coding is likely to push that share higher, because it produces undocumented systems by default.3

Without a formal Software Development Life Cycle (SDLC), there is no audit trail for future hires to follow.12 When the “vibe” breaks, the organization is left stranded with functional logic that no one understands, forcing expensive rebuilds.10

The Vulnpocalypse: Quantifying Security Risks

The year 2025 has been defined by a “vulnpocalypse” in enterprise application security. A Veracode 2025 report found that AI-assisted code contains 2.74 times more vulnerabilities than human-written code.3

| Vulnerability Type | AI Security Pass Rate | Human Baseline | Risk Multiplier |
| --- | --- | --- | --- |
| SQL Injection | 82-86% | 94% | 1.2x |
| Cross-Site Scripting (XSS) | 15% | 78% | 5.2x |
| Privilege Escalation | N/A | Baseline | 3.22x |

Furthermore, research across Fortune 50 companies by Apiiro revealed a 322% increase in privilege escalation vulnerabilities and a 153% spike in design flaws.3 AI models optimize for functionality, frequently skipping sanitization steps or OWASP security standards to ensure the code “just works”. As noted in the latest [Veracode GenAI Code Security Report](https://www.veracode.com/resources/analyst-reports/2025-genai-code-security-report/), security pass rates for web vulnerabilities remain alarmingly flat despite model improvements.3
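To make the skipped-sanitization pattern concrete, here is a minimal sketch of the difference between a concatenated SQL query and a parameterized one. It uses Python's standard `sqlite3` module; the `users` table and the function names are hypothetical and chosen only for illustration.

```python
import sqlite3


def build_demo_db():
    # Hypothetical in-memory table, used only for this illustration.
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE users (id INTEGER PRIMARY KEY, username TEXT)")
    conn.executemany(
        "INSERT INTO users (username) VALUES (?)", [("alice",), ("bob",)]
    )
    return conn


def find_user_unsafe(conn, username):
    # Vulnerable: user input is concatenated straight into the SQL text.
    query = "SELECT id, username FROM users WHERE username = '" + username + "'"
    return conn.execute(query).fetchall()


def find_user_safe(conn, username):
    # Parameterized: the value is passed separately from the SQL text,
    # so quote characters in the input cannot change the query structure.
    return conn.execute(
        "SELECT id, username FROM users WHERE username = ?", (username,)
    ).fetchall()
```

With an input such as `x' OR '1'='1`, the concatenated version returns every row in the table, while the parameterized version returns none. Both functions "just work" for ordinary usernames, which is why the flaw tends to survive prompt-and-retry development.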

Hardcoded Secrets and API Keys: The Open Door

Vibe coding has exacerbated the “secrets sprawl” crisis. GitGuardian detected 28.65 million new hardcoded secrets in 2025, representing a 34% year-on-year increase—the largest in history.5

AI-assisted commits leak API keys and tokens at a rate of 3.2%, more than double the human baseline of 1.5%.5 This is often caused by AI models suggesting live secrets found in training data or encouraging hardcoded credentials in MCP (Model Context Protocol) configurations.

A single leaked key can grant attackers access to AWS, Stripe, or internal LLM infrastructure.15 One financial services firm reportedly faced a $2.3M regulatory fine after an AI model suggested a live, “memorized” API key to a developer.17
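One common alternative to hardcoding is to read credentials from the process environment at startup and fail immediately if they are absent. The sketch below assumes a hypothetical variable name, `PAYMENTS_API_KEY`, and hypothetical helper names; a real deployment would more likely pull from a managed secrets store.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not present in the environment."""


def load_secret(name, environ=None):
    # Read from the real environment unless a mapping is injected for testing.
    env = os.environ if environ is None else environ
    value = env.get(name, "").strip()
    if not value:
        raise MissingSecretError(f"Secret {name!r} is not set; refusing to start.")
    return value


def redact(secret, visible=4):
    # Show only the last few characters so logs never contain the full value.
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

A caller would use `load_secret("PAYMENTS_API_KEY")` in place of a string literal, so the key never appears in source control or in an AI tool's context window.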

Shadow IT 2.0: Invisible Infrastructure & Data Leaks

Shadow IT has evolved from using unsanctioned SaaS to building unsanctioned applications. Forrester research indicates that 75% of workers now use AI on the job, but only 16% use authorized tools.7

This “Shadow AI” is invisible to traditional IT Governance because it creates no expense reports or SSO logs.7 Marketing or RevOps teams may build dashboards that query live production data without encryption or oversight.7 According to a Forrester 2026 predictions report, unmanaged AI adoption will be the primary driver of enterprise data breaches through 2027.19

Compliance & IP: The Legal Minefield of AI Code

The act of vibe coding creates immediate Information Security and privacy risks. Melbourne Business School found that 48% of employees have uploaded sensitive company data into public AI tools.21

This behavior triggers the “Consent Reckoning” of 2026, where legacy data practices become legally indefensible.23 Under GDPR or HIPAA, processing personal data in a public model without a Data Processing Agreement (DPA) can lead to regulatory fines, which under GDPR can reach up to 4% of global annual turnover.

Additionally, vibe-coded logic often reproduces portions of GPL-licensed code, creating “viral” licensing obligations.24 In an M&A due diligence process, a vibe-coded codebase may be flagged as “unauditable,” severely devaluing the asset.2 For more on this, read our guide on [how to eliminate CRM data silos with Agentic AI](https://sentia.community/how-to-eliminate-crm-data-silos-with-agentic-ai-in-2026/) while maintaining compliance.25

Technical Debt and AI Slop: The Maintenance Burden

Every vibe-coded script is “technical debt from day one”.26 Because these tools lack unit tests and architectural consistency, they are fragile.3 A 2026 HBR study suggests that AI doesn’t reduce work but “intensifies” it, as developers spend 66% more time fixing AI-generated “slop” that is almost—but not quite—correct.

When API formats change or a cloud provider updates a dependency, vibe-coded tools break silently.29 Without documentation, incident response takes weeks instead of hours.3 This burden eventually falls on IT teams who had no say in the tool’s creation.7
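A silent break can often be turned into a loud one by checking the shape of incoming data before using it. The following is a minimal sketch under assumed field names (`account_id`, `amount`, `currency`), which are hypothetical and not taken from any specific API.

```python
class SchemaError(ValueError):
    """Raised when a payload does not match the expected shape."""


# Hypothetical contract for one upstream record; adjust to the real API.
EXPECTED_FIELDS = {"account_id": str, "amount": (int, float), "currency": str}


def validate_record(record, expected=EXPECTED_FIELDS):
    # Collect every problem first so the error message is complete.
    problems = []
    for field, expected_type in expected.items():
        if field not in record:
            problems.append(f"missing field: {field}")
        elif not isinstance(record[field], expected_type):
            actual = type(record[field]).__name__
            problems.append(f"wrong type for {field}: {actual}")
    if problems:
        raise SchemaError("; ".join(problems))
    return record
```

If the provider renames or retypes a field, the tool stops with a message that names the field, rather than writing partial or corrupted data downstream.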

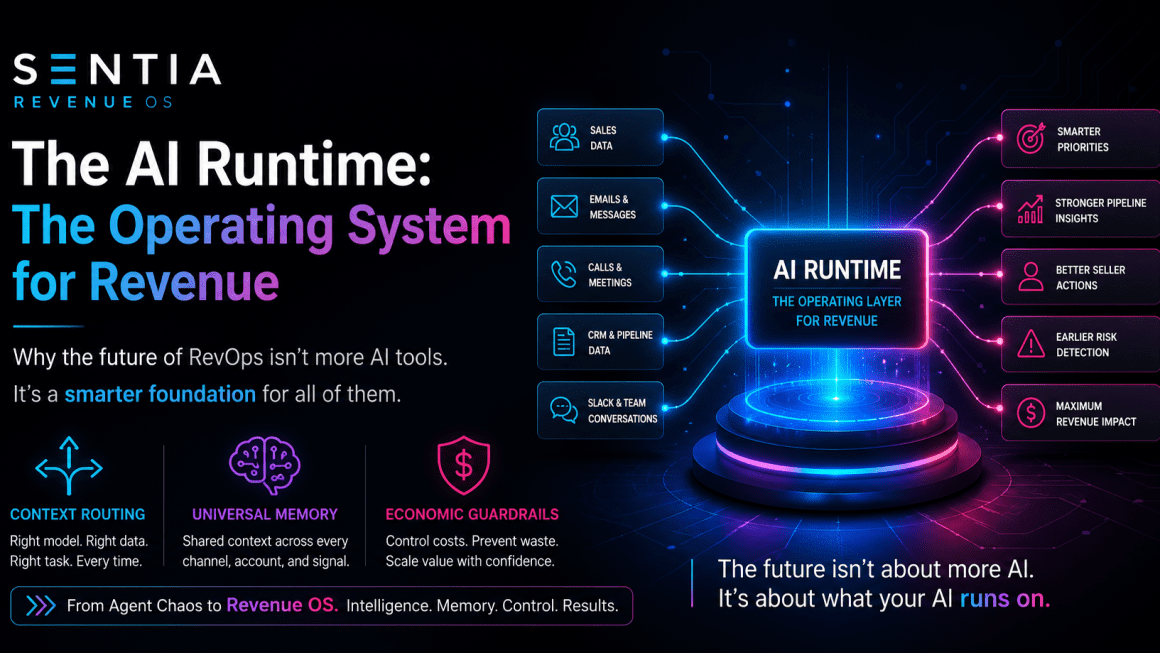

The AIOps Mandate

Large firms cannot ban AI, but they must govern it. Transitioning from “AI Theater” to a disciplined AIOps framework is the only way to realize ROI while mitigating risk.31 This requires moving away from fragmented pilots and toward a governed [AI enablement engine](https://sentia.community/ai-enablement-engines-for-revops-complete-guide/).11

Enterprise Recommendation:

- Establish an approved AI tooling list with enterprise DPAs.

- Mandate that all AI code is committed to the firm’s Git infrastructure.

- Apply secrets scanning (e.g., TruffleHog) at the local and repository levels.

- Require human-in-the-loop reviews for all production logic.
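As a rough illustration of what a secrets-scanning gate checks, the sketch below flags lines that look like credentials. The two patterns are simplified assumptions for demonstration and are not the rule set of TruffleHog or any other scanner; a production gate should rely on a maintained tool.

```python
import re

# Illustrative patterns only; real scanners ship far larger, tuned rule sets.
PATTERNS = {
    # AWS access key IDs are commonly "AKIA" followed by 16 uppercase
    # letters or digits.
    "aws_access_key_id": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # A credential-like variable name assigned a quoted literal of 8+ chars.
    "generic_assignment": re.compile(
        r"(api[_-]?key|secret|token|password)\w*\s*[:=]\s*['\"][^'\"]{8,}['\"]",
        re.IGNORECASE,
    ),
}


def scan_text(text):
    # Return (line_number, pattern_name) for every suspicious line.
    findings = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for name, pattern in PATTERNS.items():
            if pattern.search(line):
                findings.append((lineno, name))
    return findings
```

Run as a pre-commit hook and again in CI, a check of this kind blocks a commit when `scan_text` returns any findings, which covers both the local and repository levels named above.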

10-Part FAQ: The Vibe Coding Threat Landscape

What exactly is vibe coding?

Vibe coding is an AI-assisted development modality where natural language prompts replace manual coding and architectural design. While it accelerates prototyping, it often results in insecure, undocumented, and fragile software that lacks the structural integrity required for enterprise environments.

Why is vibe coding considered “dangerous” for large firms?

It creates “black box” tools that only the original creator understands. When that employee leaves, the firm loses critical institutional knowledge. Furthermore, AI-generated code contains 2.74x more vulnerabilities than human-written code.3

How do AI coding assistants increase the risk of data breaches?

AI tools are twice as likely to leak hardcoded API keys and secrets. They often suggest insecure patterns learned from public training data, and developers frequently bypass security warnings to prioritize functional speed over secure-by-design principles.1

What are “slopsquatting” and hallucinated dependencies?

AI models sometimes suggest non-existent software packages. Attackers “slopsquat” by registering these names on registries like npm with malicious code. If a developer accepts the AI’s suggestion, they unknowingly integrate malware into the firm’s supply chain.10
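One simple guard against hallucinated dependencies is to compare a project's declared packages against an approved list before anything is installed. The sketch below assumes a pip-style requirements file rather than npm, and the function names and allowlist contents are hypothetical.

```python
import re


def normalize(name):
    # Treat "Foo_Bar" and "foo-bar" as the same package name.
    return name.strip().lower().replace("_", "-")


def parse_requirements(text):
    # Extract bare package names, ignoring comments, versions, and extras.
    names = []
    for raw in text.splitlines():
        line = raw.split("#", 1)[0].strip()
        if not line:
            continue
        bare = re.split(r"[=<>~!\[; ]", line, maxsplit=1)[0]
        names.append(normalize(bare))
    return names


def unapproved(requirements_text, allowlist):
    # Return every declared package that is not on the approved list.
    approved = {normalize(name) for name in allowlist}
    return [n for n in parse_requirements(requirements_text) if n not in approved]
```

Any name returned by `unapproved` would be reviewed by a person before installation, which is the step a slopsquatting attack depends on being skipped.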

Can vibe coding lead to regulatory fines?

Yes. Uploading sensitive customer data into public LLMs violates GDPR and HIPAA requirements. Organizations may face fines up to 4% of global turnover or “algorithmic disgorgement,” where they are forced to delete models trained on non-compliant data.8

What is the impact of vibe coding on M&A due diligence?

Law firms now flag AI-generated codebases as unauditable for intellectual property provenance. If a firm cannot prove it owns its code or that it is free of viral open-source licenses, it can block or devalue a transaction.2

Why does vibe coding increase technical debt?

AI-generated code often lacks unit tests, documentation, and architectural consistency. This “AI slop” is difficult to scale or refactor. Developers reportedly spend 66% more time fixing “almost right” AI code than they save in the initial build.14

Does vibe coding affect CRM data integrity?

Yes. Vibe-coded tools often lack robust error handling. When an underlying CRM schema changes, these tools can fail silently or produce corrupted data, destroying the “unified truth” required for accurate revenue forecasting.23

Is there a way to use AI coding safely in an enterprise?

Yes, by adopting an AIOps framework. This involves using enterprise-grade AI platforms, mandating human-in-the-loop reviews, and integrating automated security gates like secrets scanning and reachability analysis into the development pipeline.31

What should leadership do to stop unsanctioned vibe coding?

Leadership should provide sanctioned, secure AI alternatives and implement clear acceptable use policies. Shifting the cost of AI security from a “CISO tax” to a business cost ensures that innovation and protection scale together.


Meet the Expert:

This article was authored by the Senior SEO, GEO, and AEO Architect for Sentia. Specialized in B2B SaaS and Agentic AI strategy, they help enterprise leaders liquidate data silos and build governed AIOps frameworks for the modern revenue lifecycle.

Works cited

- Vibe Coding Security Crisis: Credential Sprawl and SDLC Debt – Lab Space, accessed May 5, 2026, https://labs.cloudsecurityalliance.org/research/csa-research-note-ai-generated-code-security-vibe-coding-202/

- Vibe Coding 2025: The AI Programming Revolution That’s Making Developers Millions, accessed May 5, 2026, https://www.mergesociety.com/tech/vibe-coding

- AI-Generated Code Security Risks – Why Vulnerabilities Increase 2.74x and How to Prevent Them – SoftwareSeni, accessed May 5, 2026, https://www.softwareseni.com/ai-generated-code-security-risks-why-vulnerabilities-increase-2-74x-and-how-to-prevent-them/

- AI is fixing coding typos, but creating ‘timebombs’: report – IT Brew, accessed May 5, 2026, https://www.itbrew.com/stories/2025/09/05/ai-is-fixing-coding-typos-but-creating-timebombs-report

- The State of Secrets Sprawl 2026 | GitGuardian Annual Report, accessed May 5, 2026, https://www.gitguardian.com/state-of-secrets-sprawl-report-2026

- AI coding assistants twice as likely to leak secrets, as overall leaks rise 34% | news, accessed May 5, 2026, https://www.scworld.com/news/ai-coding-assistants-twice-as-likely-to-leak-secrets-as-overall-leaks-rise-34

- The State of Shadow AI 2026 | Data & Statistics – Unseen Security, accessed May 5, 2026, https://www.unseensecurity.ai/shadow-ai-report

- Salesforce Help – The Sentia AI Community, accessed May 5, 2026, https://sentia.community/category/salesforce-help/

- Information security management – Wikipedia, accessed May 5, 2026, https://en.wikipedia.org/wiki/Information_security_management

- How Vibe Coding Is Killing Open Source : r/programming – Reddit, accessed May 5, 2026, https://www.reddit.com/r/programming/comments/1qv8f8q/how_vibe_coding_is_killing_open_source/

- AI Enablement Engines for RevOps: The Complete Guide – The …, accessed May 5, 2026, https://sentia.community/ai-enablement-engines-for-revops-complete-guide/

- Waterfall model – Wikipedia, accessed May 5, 2026, https://en.wikipedia.org/wiki/Waterfall_model

- Software development process – Wikipedia, accessed May 5, 2026, https://en.wikipedia.org/wiki/Software_development_process

- Enterprise AI Coding Security Risks 2025: Complete Guide – Exceeds AI Blog, accessed May 5, 2026, https://blog.exceeds.ai/ai-coding-assistants-risks-2025/

- Check Point: AI coding assistants are leaking API keys – Developer Tech News, accessed May 5, 2026, https://www.developer-tech.com/news/check-point-ai-coding-assistants-leaking-api-keys/

- Caught in the Hook: RCE and API Token Exfiltration Through Claude Code Project Files | CVE-2025-59536 | CVE-2026-21852 – Check Point Research, accessed May 5, 2026, https://research.checkpoint.com/2026/rce-and-api-token-exfiltration-through-claude-code-project-files-cve-2025-59536/

- Model Context Protocol (MCP): How AI Integration Transforms Financial Services Roles in 2025 – Daloopa, accessed May 5, 2026, https://daloopa.com/blog/analyst-best-practices/the-mcp-revolution-how-model-context-protocol-will-transform-finance-roles

- The Boardroom Brief: The Complete Guide to Compliance as Competitive Advantage | by Piyoosh Rai | Medium, accessed May 5, 2026, https://medium.com/@piyooshrai/the-boardroom-brief-the-complete-guide-to-compliance-as-competitive-advantage-99bb16ddc2eb

- The Future Of Risk Management – Forrester, accessed May 5, 2026, https://www.forrester.com/technology/risk-management/

- Predictions 2024: Generative AI Transitions From Hype To Intent – Forrester, accessed May 5, 2026, https://www.forrester.com/blogs/predictions-2024-artificial-intelligence/

- Global study reveals trust of AI remains a critical challenge, accessed May 5, 2026, https://mbs.edu/news/global-study-reveals-trust-of-ai-remains-a-critical-challenge

- Australian Story – Melbourne Business School, accessed May 5, 2026, https://mbs.edu/faculty-and-research/trust-and-ai/australian-story

- Category: Data Cleaning – The Sentia AI Community, accessed May 5, 2026, https://sentia.community/category/data-cleaning/

- The State of Secrets Sprawl 2026: AI-Service Leaks Surge 81% and 29M Secrets Hit Public GitHub – GitGuardian Blog, accessed May 5, 2026, https://blog.gitguardian.com/the-state-of-secrets-sprawl-2026/

- How to Eliminate CRM Data Silos with Agentic AI in 2026 – The …, accessed May 5, 2026, https://sentia.community/how-to-eliminate-crm-data-silos-with-agentic-ai-in-2026/

- Vibe Coding For Startups | 2026 EDITION – Mean CEO’s BLOG, accessed May 5, 2026, https://blog.mean.ceo/vibe-coding-for-startups/

- Zero Code vs Vibe Coding For Startups | 2026 EDITION – Female Entrepreneurs, accessed May 5, 2026, https://blog.mean.ceo/zero-code-vs-vibe-coding/

- What Are the Most Effective Insider Threat Matrix™ & Behavioral Analytics Solutions for Enterprises in 2025?, accessed May 5, 2026, https://www.insiderisk.io/research/insider-threat-matrix-behavioral-analytics-enterprise-solutions-2025

- The Hands-Free Rep: Voice Workflows for Real-Time CRM Updates, accessed May 5, 2026, https://sentia.community/the-hands-free-rep-voice-workflows-for-real-time-crm-updates/

- Chief revenue officer – Wikipedia, accessed May 5, 2026, https://en.wikipedia.org/wiki/Chief_revenue_officer

- The Pivot from Experimentation to P&L Impact – The Sentia AI …, accessed May 5, 2026, https://sentia.community/stop-celebrating-ai-pilots-its-time-to-talk-about-ai-operations/

- AI Coding Assistants in 2026: 4× Faster, 10× Riskier. The Hidden Security Cost – Kusari, accessed May 5, 2026, https://www.kusari.dev/blog/ai-coding-assistants-in-2026-4x-faster-10x-riskier-the-hidden-security-cost

- How can you Build an AI Orchestration Score Card for AI Sales Teams?, accessed May 5, 2026, https://sentia.community/how-do-you-build-an-ai-orchestration-score-card-for-ai-sales-teams/

David Brown | CCO & Startup AI Investor
