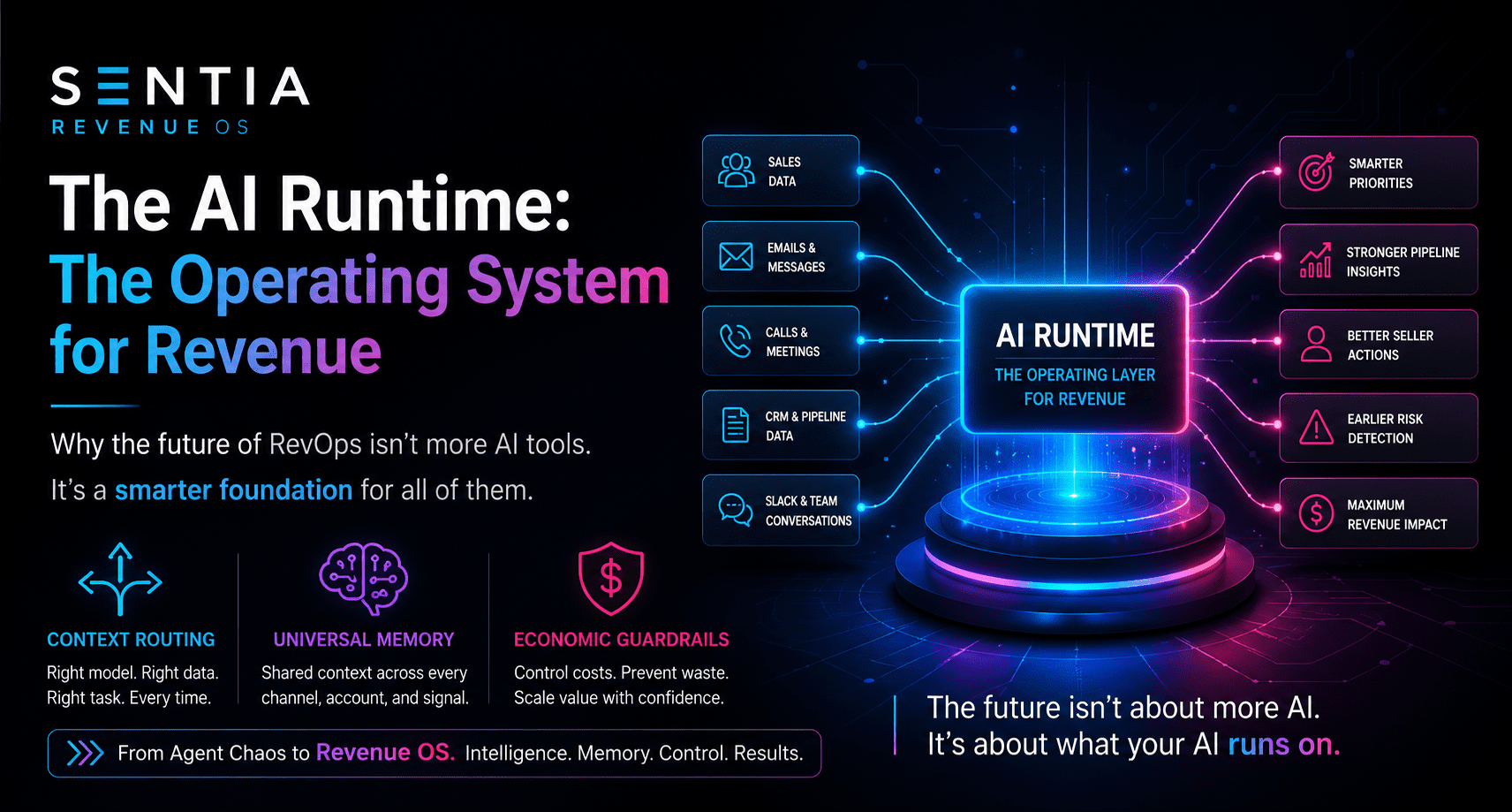

Key takeaway: The next major shift in revenue operations will not favor companies that simply add more AI agents. It will favor companies that control what those agents run on: a centralized AI Runtime with context routing, universal memory, and economic guardrails.

The AI Runtime: Why We’re Building the Operating System for Revenue

For the last two years, most of the AI conversation has focused on the model.

Which large language model is smartest? Which one writes better emails? Which one summarizes calls faster? Which one can reason, plan, code, research, or sell?

Those questions matter, but they are no longer the most important questions.

In revenue operations, the larger issue is not whether AI can perform a task. It is whether AI can operate safely, economically, and intelligently across the entire business.

That is why Sentia is focused on the AI Runtime.

Not another chatbot. Not another isolated agent. Not another point solution that performs one narrow workflow inside one disconnected application.

The AI Runtime is the operating layer that allows intelligence to move through the revenue organization with memory, context, governance, and control.

Executive Summary

- The model is not enough. Large language models are the brain, but revenue teams need a nervous system that connects data, workflows, memory, and action.

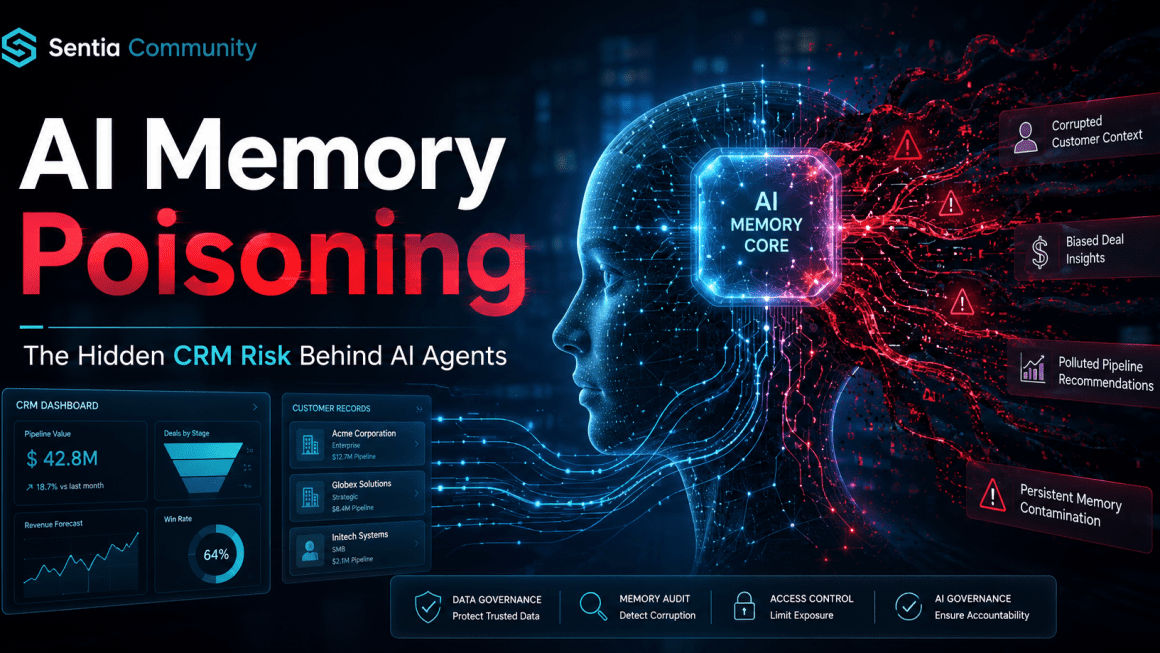

- Agent chaos is the enemy. Siloed AI apps create brittle, expensive, and insecure workflows because each agent operates with limited context.

- The runtime is the solution. A centralized runtime layer can manage context routing, universal memory, permissions, costs, and workflow execution.

- The future is an operating system for revenue. By 2026, the key question will not be “Which AI are you using?” It will be “What is your AI running on?”

The Problem: The Agent Chaos Trap

Most companies are currently stuck in what we might call the “app phase” of AI adoption.

They add an AI tool for lead scoring. Then another for email drafting. Then another for call summaries. Then another for forecasting, research, enablement, data cleanup, pipeline updates, and meeting preparation.

Each tool looks useful in isolation. Each one promises productivity. Each one appears to solve a specific problem.

But together, they create a new operating problem.

The lead scoring agent cannot see the email that was just sent. The email drafting agent may not know the latest negotiation status. The meeting preparation tool may miss the context buried in Slack, call transcripts, CRM notes, or LinkedIn messages.

Instead of one intelligent operating environment, the business ends up with a patchwork of disconnected AI apps.

What agent chaos creates

- Context loss: Agents make decisions without seeing the complete customer or account picture.

- Redundant token spend: Multiple tools repeatedly process the same information in different places.

- Inconsistent memory: One agent remembers something another agent cannot access.

- Workflow fragmentation: AI creates output, but the business still has to manually coordinate action.

- Revenue leakage: Follow-ups, risks, buying signals, and commitments fall through the cracks.

This is the trap: companies believe they are becoming more intelligent by adding more agents, but they are often just creating more disconnected software.

The Runtime Shift: From AI Apps to AI Infrastructure

Every major computing era eventually moves from applications to infrastructure.

Personal computing needed an operating system. Cloud computing needed cloud infrastructure. Mobile computing needed app platforms, identity, notifications, permissions, and device-level services.

Agentic AI now needs the same kind of operating layer.

The AI Runtime sits between the AI models and the business systems. It governs how intelligence accesses data, retains memory, chooses models, triggers workflows, manages costs, and executes revenue actions.

In simple terms, the model is the brain. The runtime is the nervous system.

1. Context Routing: The Right Intelligence for the Right Task

Not every revenue task requires the most powerful model available.

Updating a lead field, summarizing a short email, classifying a task, or checking a simple status does not need the same compute as complex negotiation analysis or multi-account strategy synthesis.

Without a runtime layer, companies often overuse heavyweight models for lightweight work. That drives unnecessary cost and slows down operations.

Context routing solves this by dynamically matching each task to the right model, tool, permission level, and data source.

What context routing enables

- Simple tasks can be routed to faster, lower-cost models.

- Complex tasks can be reserved for more capable reasoning models.

- Sensitive tasks can be routed through stricter permission and governance rules.

- Revenue-critical decisions can use richer customer and pipeline context.
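
The routing rules above can be sketched in a few lines of Python. This is a minimal illustration, not Sentia's implementation: the model names, task kinds, permission labels, and data source names are all hypothetical placeholders chosen to mirror the examples in this section.

```python
from dataclasses import dataclass

# Hypothetical model names; placeholders, not real products.
LIGHT_MODEL = "small-fast-model"
HEAVY_MODEL = "large-reasoning-model"

# Illustrative lightweight task kinds, taken from the examples in the text.
SIMPLE_KINDS = {
    "update_lead_field",
    "summarize_short_email",
    "classify_task",
    "check_status",
}


@dataclass(frozen=True)
class Task:
    kind: str
    sensitive: bool = False


@dataclass(frozen=True)
class Route:
    model: str
    permission_level: str
    context_sources: tuple


def route(task: Task) -> Route:
    """Pick a model, permission level, and data sources for one task."""
    if task.kind in SIMPLE_KINDS:
        # Lightweight work: cheaper model, minimal context.
        model, sources = LIGHT_MODEL, ("crm",)
    else:
        # Complex or unrecognized work: capable model, richer context.
        # Sending unknown kinds here is an assumption of this sketch.
        model, sources = HEAVY_MODEL, ("crm", "email", "calls", "pipeline_history")
    # Sensitive tasks always go through the stricter permission path.
    permission = "restricted" if task.sensitive else "standard"
    return Route(model, permission, sources)
```

In this sketch, `route(Task("classify_task"))` selects the lightweight model with CRM-only context, while `route(Task("negotiation_analysis", sensitive=True))` selects the capable model under the restricted permission level. A production router would likely estimate complexity from the request itself rather than from a fixed set of task kinds.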

Key takeaway: Context routing helps optimize the token budget by matching task complexity to model capability automatically.

2. Universal Memory: Stop Operating with Goldfish AI

Most AI tools still behave as if they have the memory of a goldfish.

They may understand the task in front of them, but they often lack persistent awareness of the customer, account, opportunity, communication history, commitments, and business context.

In revenue operations, that is a serious limitation.

A prospect does not experience your company as a series of disconnected tools. They experience one relationship. Your AI should understand that relationship the same way.

Universal memory gives agents access to a shared cognitive layer across CRM data, email threads, call transcripts, LinkedIn messages, notes, tasks, Slack conversations, calendars, and pipeline history.

Why universal memory matters

- An AI agent can understand what happened before recommending what should happen next.

- Sales, marketing, customer success, and leadership can operate from shared context.

- Customer interactions become more consistent across channels.

- Follow-ups become more relevant because the system remembers prior commitments and signals.
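
One way to picture a shared memory layer is as a single store that every agent writes to and reads from, keyed by account. The sketch below is an assumption-laden illustration in Python: the `MemoryEvent` and `SharedMemory` names, the field names, and the in-memory dictionary are all hypothetical, and a real system would need persistence, permissions, and retrieval beyond a simple timeline.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime


@dataclass(frozen=True)
class MemoryEvent:
    account_id: str
    source: str          # e.g. "crm", "email", "call", "slack"
    timestamp: datetime
    summary: str


class SharedMemory:
    """In-memory store that all agents share (hypothetical design)."""

    def __init__(self):
        self._events = defaultdict(list)

    def record(self, event: MemoryEvent) -> None:
        # Any agent or integration can append what it observed.
        self._events[event.account_id].append(event)

    def timeline(self, account_id: str, sources=None) -> list:
        # Return the account's history in chronological order,
        # optionally limited to certain source systems.
        events = self._events.get(account_id, [])
        if sources is not None:
            events = [e for e in events if e.source in sources]
        return sorted(events, key=lambda e: e.timestamp)


memory = SharedMemory()
memory.record(MemoryEvent("acct-1", "call", datetime(2025, 3, 4), "Buyer asked for pricing"))
memory.record(MemoryEvent("acct-1", "email", datetime(2025, 3, 1), "Intro email sent"))

# An email drafting agent can now see the call that happened after the intro.
history = memory.timeline("acct-1")
```

The design choice worth noting is that agents never keep private copies of account history. Each agent asks the same store for the same timeline, which is what lets a follow-up reflect a commitment made on a call that a different tool processed.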

Key takeaway: Universal memory breaks down data silos by giving every agent one shared, connected view of each prospect, customer, account, and opportunity.

3. Economic Guardrails: Controlling the Cost of Agentic AI

Agentic AI can create enormous value, but it can also quietly consume enormous operating expense.

Autonomous agents can loop. They can call APIs repeatedly. They can process the same information multiple times. They can escalate simple tasks to expensive models. They can generate hidden infrastructure costs that leaders discover only after the budget has already been spent.

That is why economic guardrails are essential.

The runtime must actively monitor token usage, API calls, model selection, task repetition, workflow duration, and cost thresholds in real time.

What economic guardrails protect against

- Runaway agent loops

- Duplicate processing

- Unnecessary heavyweight model usage

- Unexpected API spend

- Uncontrolled autonomous workflows
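
A guardrail of this kind can be as simple as a counter that every agent call must pass through before it runs. The following Python sketch is illustrative only: the `Guardrail` class, its limits, and the task key format are hypothetical, and it tracks just three of the signals named above (token usage, call count, and task repetition).

```python
from dataclasses import dataclass, field


class BudgetExceeded(Exception):
    """Raised when a workflow would cross one of its limits."""


@dataclass
class Guardrail:
    max_tokens: int
    max_calls: int
    max_repeats: int = 3
    tokens_used: int = 0
    calls_made: int = 0
    _seen: dict = field(default_factory=dict)

    def charge(self, task_key: str, tokens: int) -> None:
        """Approve one model call, or raise before any spend is recorded."""
        repeats = self._seen.get(task_key, 0) + 1
        if repeats > self.max_repeats:
            # The same task keeps coming back: likely a runaway loop.
            raise BudgetExceeded(f"task {task_key!r} repeated {repeats} times")
        if self.calls_made + 1 > self.max_calls:
            raise BudgetExceeded("call limit reached")
        if self.tokens_used + tokens > self.max_tokens:
            raise BudgetExceeded("token budget exhausted")
        # All checks passed, so record the spend.
        self._seen[task_key] = repeats
        self.calls_made += 1
        self.tokens_used += tokens


guard = Guardrail(max_tokens=1000, max_calls=10, max_repeats=2)
guard.charge("summarize:acct-1", 300)
guard.charge("summarize:acct-1", 300)
# A third identical request would raise BudgetExceeded instead of running.
```

Checking limits before recording the spend means a rejected call leaves the counters unchanged, so the workflow can be paused, escalated to a person, or retried on a cheaper model without corrupting the budget state.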

Key takeaway: Economic guardrails make autonomous AI more predictable, scalable, and commercially viable.

Why This Matters for Revenue Operations

Revenue operations is uniquely exposed to the agent chaos problem because RevOps sits at the center of customer data, process design, pipeline visibility, forecasting, sales execution, marketing handoffs, and customer lifecycle management.

If AI is deployed in disconnected pockets, RevOps becomes harder to govern, not easier.

But when AI runs through a shared runtime layer, RevOps becomes the control plane for intelligent revenue execution.

A revenue AI runtime can help teams

- Prioritize accounts based on real-time signals

- Identify stalled opportunities before they are lost

- Surface customer risks across channels

- Recommend next best actions for sellers and account managers

- Reduce manual CRM hygiene work

- Improve forecasting context

- Connect insights directly to execution

The value is not simply that AI can write, summarize, or classify.

The value is that AI can help operate the revenue system itself.

The Sentia Vision: The Revenue OS

At Sentia, we are not building better bots for the sake of building better bots.

We are building toward a Revenue OS: a system that governs how intelligence flows through the revenue organization.

That means AI should not sit outside the workflow waiting for prompts. It should operate inside the flow of revenue work, with the right memory, permissions, context, and financial controls.

In the 1990s, businesses needed Windows for the PC era. In the 2010s, they needed AWS for the cloud era. In the agentic AI era, revenue teams will need a runtime to manage intelligence.

The companies that win will not be the ones with the most AI tools. They will be the ones with the best AI operating layer.

Final Thought

The question is no longer only, “Which AI are you using?”

The more important question is, “What is your AI running on?”

Because the future of revenue operations will not be powered by disconnected agents. It will be powered by an intelligent runtime that connects context, memory, economics, and action.

Frequently Asked Questions

What is an AI Runtime?

An AI Runtime is the operating layer that sits between AI models and business systems. It manages how agents access context, retain memory, select models, control costs, and execute workflows.

Why does revenue operations need an AI Runtime?

Revenue operations depends on connected data, consistent process, pipeline visibility, and coordinated execution. A runtime helps prevent disconnected AI tools from creating fragmented workflows, duplicated effort, and inconsistent customer context.

What is agent chaos?

Agent chaos happens when companies deploy multiple isolated AI agents that each have their own memory, data access, workflow logic, and cost structure. This can create context loss, redundant spend, poor governance, and missed revenue signals.

What is context routing?

Context routing is the process of matching each AI task to the right model, data source, permission level, and workflow path based on the complexity and sensitivity of the task.

What is universal memory for AI agents?

Universal memory is a shared intelligence layer that allows AI agents to remember and use relevant context across CRM records, emails, calls, messages, notes, tasks, calendars, and other business systems.

Why are economic guardrails important for agentic AI?

Economic guardrails help prevent runaway token spend, redundant API calls, unnecessary use of expensive models, and uncontrolled agent loops. They make AI automation more predictable and commercially sustainable.