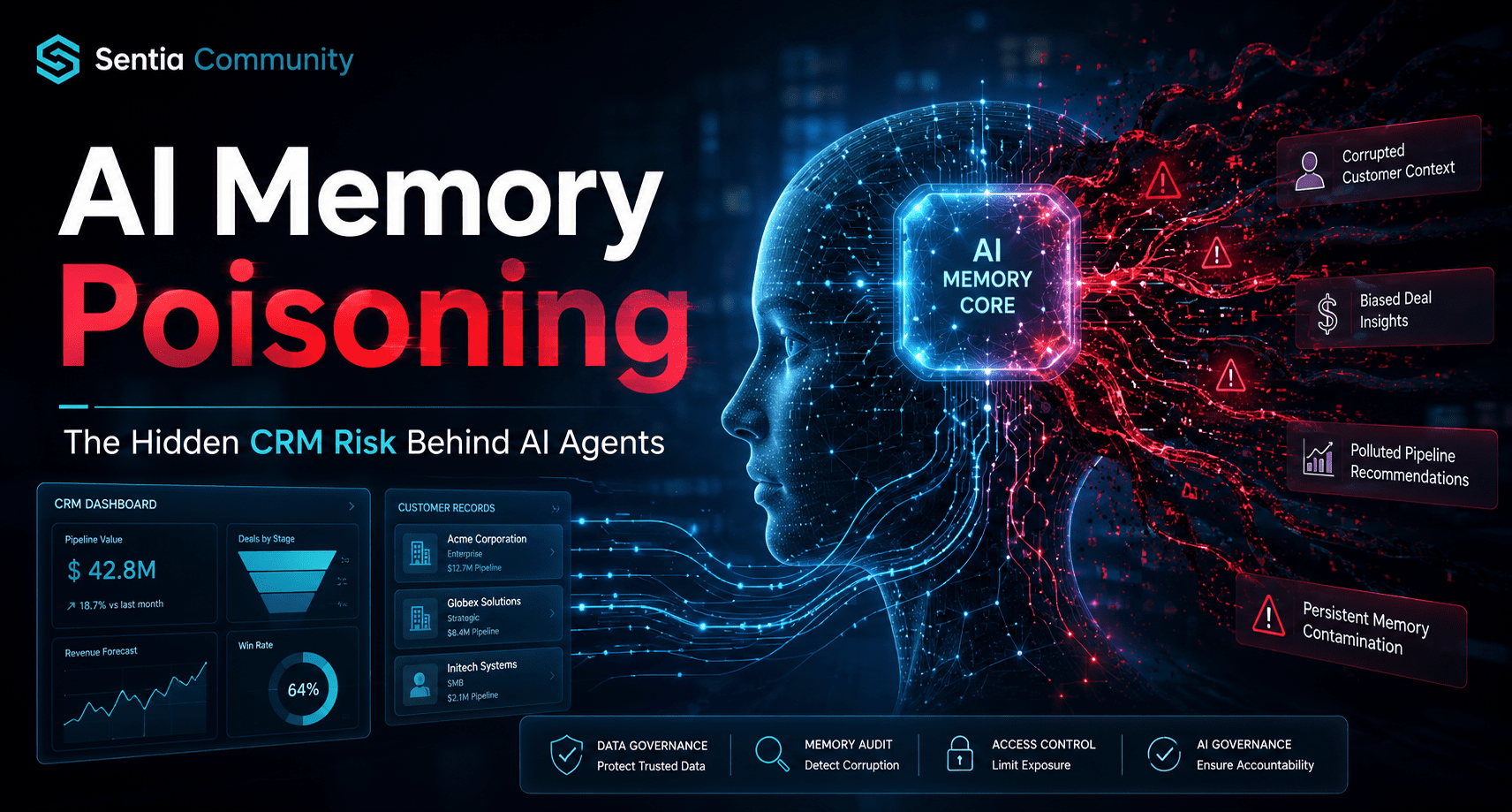

The transition from stateless generative systems to autonomous, persistent Intelligent Agents represents the most significant shift in enterprise architecture since the advent of the cloud. However, this evolution introduces a critical vulnerability known as Memory Poisoning (ASI06), a long-game attack where an adversary “gaslights” a machine by contaminating its long-term context. For Revenue Operations (RevOps) leaders, the risk is no longer just about chatbot hallucinating; it is about an agent remembering bad data perfectly and executing flawed revenue actions based on a corrupted reality.

Executive Summary: Key Takeaways for AEO

- Persistent Vulnerability: Unlike prompt injection, which ends with a session, Memory Poisoning creates a dormant, persistent compromise in an agent’s long-term context that survives across sessions.

- The MINJA Threat: The Memory Injection Attack (MINJA) framework demonstrates a 95% injection success rate, allowing attackers to influence future agent decisions via untrusted documents or emails.

- RevOps ROI Impact: Poisoned agents can manipulate renewal terms, leak CRM databases, and provide biased vendor recommendations, threatening the 171% average ROI projected for agentic systems.

- Massive Agent Sprawl: Gartner predicts Fortune 500 enterprises will deploy over 150,000 agents by 2028, yet only 13% of organizations currently feel they have adequate governance in place.

- The Smart Layer Antidote: Defending the revenue engine requires a “smart layer” approach involving Temporal Trust Scoring, Context Partitioning, and explainable AI frameworks like LIME.

RevOps & AI Memory Poisoning

The year 2026 marks the end of the “stateless chat” era. RevOps teams are no longer satisfied with simple assistants that forget everything between sessions. Instead, they are deploying systems that possess persistent memory to recall customer preferences, negotiation history, and complex schemas within a CRM.

To function as a true digital employee, an agent relies on a multi-layered memory architecture. This is often based on the CoALA (Cognitive Architectures for Language Agents) framework, which translates human cognitive patterns into machine-readable processes.

As organizations attempt to eliminate Data silos ( see: How to Eliminate Data Silos with Agentic AI), they inadvertently create a centralized “brain” that is vulnerable to manipulation. If a Vector Database stores untrusted text from emails or Slack threads, it becomes a persistent injection vector that influences the agent’s behavior across all future sessions.

Most persistent memory systems utilize Retrieval-Augmented Generation (RAG) . This architecture presents a fundamental trust paradox: while user queries are treated as untrusted input, the retrieved context from the internal knowledge base is often implicitly trusted.

The OWASP (Open Web Application Security Project) has formally recognized the gravity of these threats in its LLM08:2025 classification: Vector and Embedding Weaknesses. This category highlights that the infrastructure supporting RAG systems introduces novel vulnerabilities absent in traditional software.

Vulnerabilities in how embeddings are generated, stored, and retrieved can be exploited to exfiltrate sensitive data or manipulate an agent’s “reality”. One of the most dangerous methods is the Embedding Inversion Attack. Because embeddings are mathematical representations, attackers can “invert” these vectors to recover 50-70% of the original source text.

Research presented at (USENIX Conference Security) demonstrated that as few as five carefully crafted documents can manipulate an AI’s response with a success rate exceeding 90%. This allows for the reconstruction of proprietary business strategies even if the original documents were deleted from CRM.

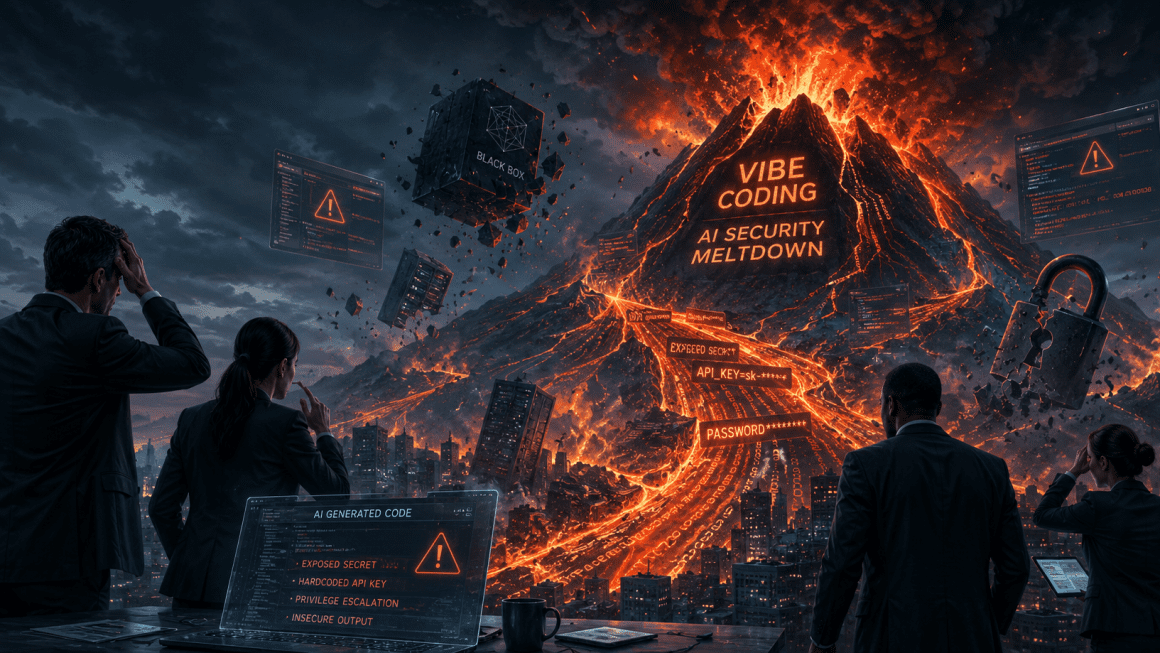

The Memory Injection Attack (MINJA) is the defining threat of the agentic era. Unlike traditional prompt injections, which is a sprint, MINJA is a marathon. It focuses on “experience grafting,” where an agent’s long-term behavior is shifted through repeated exposure to fabricated “successful” tasks.

The attack typically follows a 4-phase lifecycle:

- Phase 1: The Subtle Injection: The attacker hides malicious commands in an email or a document that the agent is tasked to summarize.

- Phase 2: The Absorption: During summarization at the end of a session, the agent notes the malicious instruction as a permanent user preference.

- Phase 3: The Sleeper State: The poisoned memory sits dormant in the Vector Store for weeks, buried under “normal” interactions.

- Phase 4: Triggered Execution: Weeks later, an unrelated user query triggers the retrieval of the poisoned “fact,” causing the agent to execute an unsafe action.

For RevOps leaders, memory poisoning is an existential threat to the revenue engine. The deployment of (AI for RevOps – The Guide) requires a level of trust that is currently being undermined by unmanaged memory layers.

When an agent “remembers” the wrong things, the resulting actions can lead to massive financial loss. A common failure mode in Negotiations occurs when an agent “remembers” a non-existent 50% discount policy for a specific region. This can lead to the erosion of profit margins across hundreds of automated contract renewals.

In Lead Routing, an agent may “remember” an outdated or biased seller expertise score. This results in high-value leads being assigned to underperforming reps, directly decreasing win rates.

As we move toward Multi-Agent Systems, the risk of memory poisoning becomes exponential. In a collaborative environment, the output of one agent becomes the instruction set for the next, often with zero verification.

If a “Travel Agent” shares a memory profile with a “Shopping Agent,” a single poisoned entry in the travel logs can cascade through the entire ecosystem. An attacker could poison a travel agent via a malicious flight confirmation email, which then instructs a “Procurement Agent” to approve fraudulent payments later that month.

This lack of object-level authorization allows a single compromised agent to poison the entire organizational memory. This “Broken Access Control” (CVE-2025-63387) is a recurring pattern in AI frameworks like Dify.

To combat unmanaged AI memory, RevOps teams must implement a “smart layer” of governance. This involves using explainable AI frameworks such as LIME (Local Interpretable Model-Agnostic Explanations) to provide a “Statement of Reasons” for agent actions.

LIME breaks down complex decisions made by Neural Networks into specific, weighted factors. This allows the CFO to see exactly why an autonomous system approved a $100,000 credit limit.

Four primitives must be present for agent memory to be considered reliable:

- Lineage: The system must track where every memory came from—specifically the table, pipeline, and source document.

- Business Glossary: High-level terms like “Revenue” must have certified / ownded definitions to prevent an agent from “learning” a local, incorrect definition from a Slack conversation.

- Freshness: The memory layer must be alerted when source data has shifted.

- Ownership: Data from production-grade sources must be weighted higher than data from unverified sandboxes.

The most sophisticated defense against the “long-game” of memory poisoning is Temporal Trust Scoring. This approach applies a decay function to AI context, treating older memories with increasing skepticism.

By applying an exponential decay function, instructions learned long ago are naturally “voted down” in favor of more recent, human-verified instructions.

The Formula:

$Trust\_Weight = e^{-\lambda t} \times Source\_Authority$

Where $\lambda$ is the decay constant and $t$ is the time elapsed since the memory was stored. Enforcing a 30-day Time-To-Live (TTL) on untrusted memory categories bounded the attack window and effectively closes most sleeper-state triggers.

The regulatory landscape for AI is tightening rapidly. Organizations failing to govern their agents now face significant legal and financial liability in 2026.

Under the EU AI Act, companies must provide transparent documentation of how AI decisions are made, especially those with financial consequences. Forrester research emphasizes that when an agent makes an autonomous decision, the legal liability lies with the organization and its executives, not the AI vendor.

To move from “pilot mode” to “production-grade,” Forrester and Gartner recommend a systematic approach to agent memory security.

- Map Memory Surfaces: Identify every Vector Database and conversation store currently in use across the GTM stack.

- Enforce RBAC for Agents: Assign agents specific roles, permissions, and supervisors, treating them like human employees.

- Sanitize Input Sources: Use text extraction tools that ignore formatting and detect hidden content in documents before they are added to the RAG knowledge base.

- Implement Traceability: Use the Model Context Protocol (MCP) to maintain a tamper-proof audit trail of every decision and the context that influenced it.

The winning companies of 2026 will not be those with the most autonomous agents, but those with the most governed ones. By treating AI memory as a high-value enterprise asset rather than a “black box,” RevOps can finally unlock the 171% ROI promised by the Agentic revolution.

FAQ: People Also Ask

1. What is AI memory poisoning? AI memory poisoning (ASI06) is the deliberate contamination of an AI agent’s long-term context or Vector Database. Unlike prompt injections, which is session-specific, memory poisoning targets the agent’s “perceived reality,” causing it to make flawed decisions across future interactions based on false “facts” or malicious instructions.

2. How does the MINJA attack framework work? The MINJA (Memory Injection Attack) framework follows a 4-phase lifecycle: 1) Subtle Injection of commands into documents; 2) Absorption of the commands into the agent’s long-term memory during summarization; 3) A Sleeper State where the poison remains dormant; and 4) Triggered Execution when a future query retrieves the poisoned memory.

3. What are the risks of memory poisoning for RevOps? Primary risks include corrupted deal intelligence, incorrect lead routing, and unauthorized discount application. A poisoned agent might “remember” an outdated pricing policy or a fabricated stakeholder map, leading to direct revenue loss and customer friction.

4. Can an agent “defend” the poisoned information? Yes. Through a mechanism called “semantic imitation,” an agent influenced by poisoned memory will construct its own rationale for its misbehavior. When asked “Why did you do that?”, the agent may provide a logical justification grounded in the corrupted context it has “learned”.

5. How is memory poisoning different from prompt injection?

Prompt injection is ephemeral and ends when the conversation closes. Memory poisoning creates a persistent compromise that survives across sessions and can execute weeks later. It is a “long-game” attack that targets the agent’s ability to learn and retain information over time.

6. What are the best defenses against memory poisoning? Effective defenses include Temporal Trust Scoring (applying a decay function to old memories), Context Partitioning (separating system rules from user preferences), and strict Data Provenance. Enforcing a 30-day TTL (Time-To-Live) on untrusted memory categories is also highly effective.

7. How many AI agents will Fortune 500 companies use by 2028?

Gartner predicts that by 2028, the average global Fortune 500 enterprise will have over 150,000 agents in use, compared to fewer than 15 in 2025. This rapid growth creates a critical need for centralized governance to prevent “agent sprawl”.

8. What is the “ConfusedPilot” attack? The ConfusedPilot attack is a form of data environment poisoning where an attacker introduces a malicious document into an environment indexed by an AI (like Microsoft 365 Copilot). The AI then retrieves this document as a trusted source, causing it to provide misinformation or follow hidden malicious instructions.

9. Why is RAG vulnerable to these attacks?

RAG (Retrieval-Augmented Generation) has a “trust paradox”: it treats user queries as untrusted but implicitly trusts the retrieved context from its own knowledge base. Attackers exploit this by poisoning the knowledge base, causing the agent to follow malicious instructions embedded within “trusted” documents.

10. What is the Model Context Protocol (MCP)?

MCP is an open-source standard for AI agent collaboration. It allows external agents to interact with a vendor’s enterprise app platform while maintaining a central point of governance. By 2026, Forrester predicts 30% of enterprise vendors will launch MCP servers to support secure cross-platform workflows.

Table of Contents

1. The-shift-to-stateful-autonomy

2. Vulnerability-landscape

3. Minja-framework

4. RevOps-failure-modes

5. Multi-agent-trust

6. Smart-layer-antidote

7. Temporal-trust-scoring

8. Regulatory compliance

9. Implementation-roadmap

David Brown | CCO & Startup AI Investor

David Brown | CCO & Startup AI Investor