G’day readers! Today, let’s delve into the world of artificial intelligence and unravel the complexities of shared tenancy models versus what I like to call siloed LLMs. As a thought leader in this space, I’m keen to shed light on why grasping these distinctions is crucial, especially as many SaaS companies seem not to take these matters as seriously as they should and offer solutions that do not truly mitigate the risks.

The AI Landscape: A Bird’s Eye View

Artificial Intelligence has become the driving force behind innovation, transforming the way businesses operate and make decisions. Within this AI landscape, most companies that offer AI do so through APIs to shared “Large Language Models” (LLMs). I see a distinct issue with this and would like to explore the alternative approach that has emerged: siloed LLMs and other AI models.

The Shared Tenancy Conundrum:

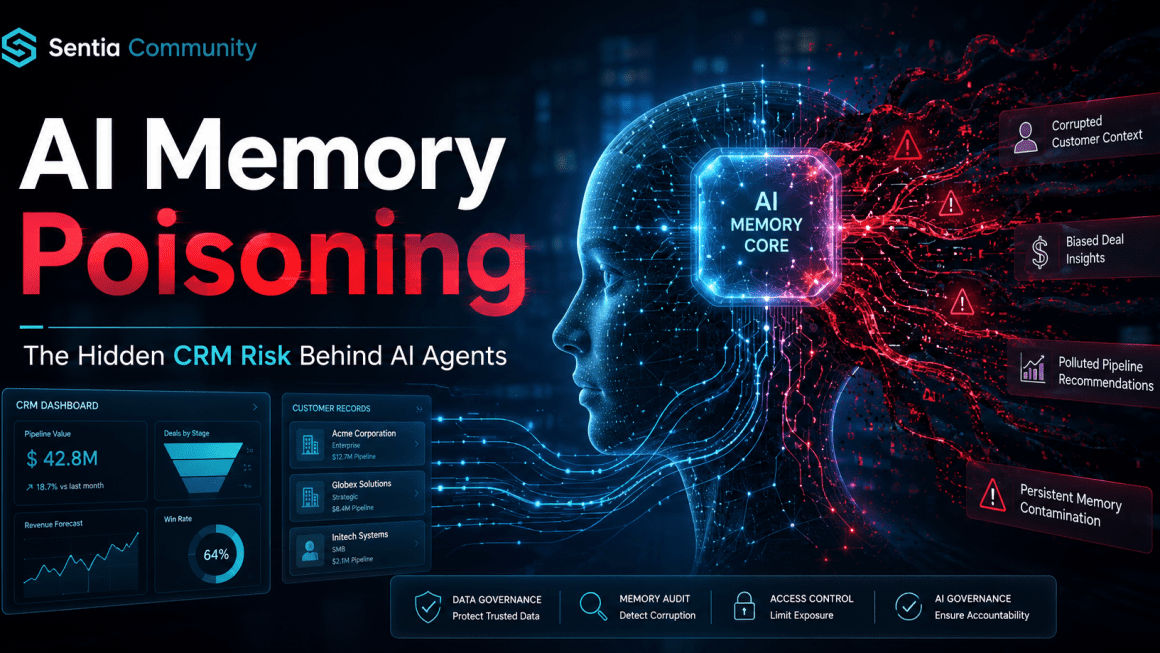

Shared tenancy models involve multiple organizations sharing a common AI model fed with data from their respective enterprise systems. It’s like a shared workspace for AI, where diverse data mingles in pursuit of collective insights. That convenience comes with concerns, particularly around data security and the patterns that can emerge from the pooled data.

Why I Stand as a Thought Leader:

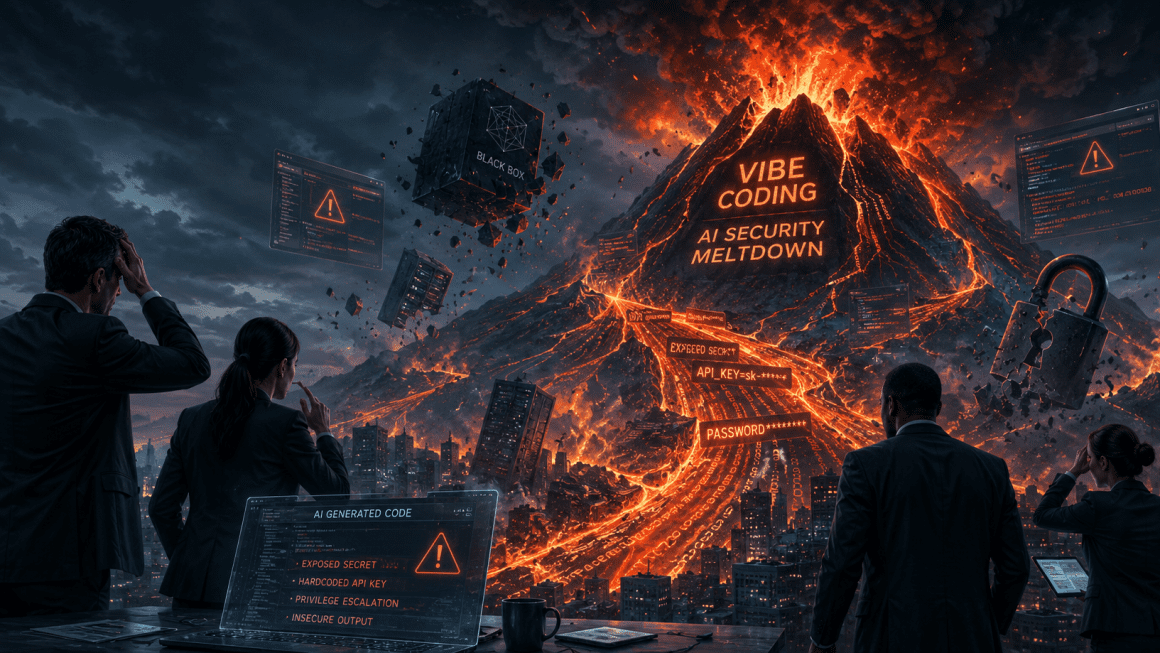

As a thought leader in the AI domain, my concerns echo through the corridors of innovation. I’ve observed a trend among many SaaS companies claiming to have tackled the challenges of shared tenancy models by anonymizing and encrypting data. However, the reality often falls short of the assurances, leaving organizations vulnerable. For example, if several staff members ask a model to draft responses to emails, the AI could discover patterns in the content of all the emails piped through it from that organization and draw inferences from them, even though specifics on individuals have been redacted.
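
To make the redaction point concrete, here is a minimal sketch in Python. Everything in it is an assumption for illustration: the three emails, the names, the `redact` helper and the stopword list are invented, and real anonymization tooling is far more sophisticated. The point is only that masking who is writing does not mask what an organization keeps writing about.

```python
import re
from collections import Counter

# Hypothetical emails from staff at one organization (invented for illustration).
PEOPLE = ["Jane Doe", "John Smith", "Priya Patel"]
EMAILS = [
    "Hi Jane Doe, the merger timeline has slipped to March.",
    "John Smith asked whether the merger will mean redundancies in the office.",
    "Please draft a reply to Priya Patel about redundancies after the merger.",
]

# Common words plus the placeholder token, ignored when looking for themes.
STOPWORDS = {"a", "about", "after", "has", "hi", "in", "name",
             "please", "the", "to", "will"}


def redact(text: str) -> str:
    """Naive redaction: replace each known personal name with a placeholder."""
    for person in PEOPLE:
        text = text.replace(person, "[NAME]")
    return text


def recurring_terms(messages: list[str], min_messages: int = 2) -> list[str]:
    """Return the words that appear in at least `min_messages` messages."""
    counts = Counter()
    for message in messages:
        # Count each word once per message so one long email cannot dominate.
        words = set(re.findall(r"[a-z]+", message.lower()))
        counts.update(words - STOPWORDS)
    return sorted(word for word, n in counts.items() if n >= min_messages)


redacted = [redact(email) for email in EMAILS]
themes = recurring_terms(redacted)
print(themes)  # ['merger', 'redundancies']
```

No individual is named in the redacted text, yet anything that sees all three messages can still tell that this organization is dealing with a merger and possible redundancies.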

Data Security: A House of Cards?

Shared tenancy models walk a fine line when it comes to data security. While encryption layers are touted as safeguards, the intermingling of data from different organizations raises red flags. My qualms stem from the fact that, in practice, not all companies are as fortified against breaches as they profess to be. The shared nature of these models poses a risk – a breach in one organization’s data could ripple across the shared space, affecting others in the consortium.

Customization Woes: One Size Fits None:

A shared model, by its nature, tends to be a jack of all trades, master of none. My concerns deepen when considering the limited room for customization. Organizations, each with unique objectives, find themselves constrained by a model that might not align with their specific needs. This lack of adaptability can stifle innovation and hinder the full potential of AI for individual organizations. A powerful integration of AI would mean the appropriate use of multiple different AI models or, even better, a series of trained models tailored to the organization.

Siloed LLMs: An Oasis of Security and Customization:

Contrastingly, siloed LLMs stand as a beacon of security and tailored precision. As a thought leader in this space, I champion the cause of siloed models within secure containers for individual organizations. The benefits are clear: enhanced data security and the freedom to fine-tune the AI model to the distinct requirements of each organization. Coupled with “Vector” storage, this can dramatically improve what AI delivers in a given use case.
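
As a rough illustration of what siloing the “Vector” storage could look like, here is a minimal in-memory sketch in Python. It is not a real vector database and not a description of any vendor’s product: the `SiloedVectorStore` and `SiloRegistry` names, the tenant names and the two-dimensional toy vectors are all hypothetical, and a production setup would also isolate the model, the container and the credentials. It only shows the core idea that each organization gets its own store and a search never looks outside it.

```python
import math
from dataclasses import dataclass, field


def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


@dataclass
class SiloedVectorStore:
    """Holds the documents of exactly one organization (hypothetical sketch)."""
    tenant_id: str
    items: list = field(default_factory=list)

    def add(self, text: str, vector: list[float]) -> None:
        self.items.append((text, vector))

    def search(self, query: list[float], top_k: int = 1) -> list[str]:
        # Ranks only this tenant's items; other tenants' data is unreachable.
        ranked = sorted(self.items, key=lambda item: cosine(query, item[1]),
                        reverse=True)
        return [text for text, _ in ranked[:top_k]]


class SiloRegistry:
    """Hands out one separate store per tenant instead of one shared index."""

    def __init__(self) -> None:
        self._stores: dict[str, SiloedVectorStore] = {}

    def store_for(self, tenant_id: str) -> SiloedVectorStore:
        if tenant_id not in self._stores:
            self._stores[tenant_id] = SiloedVectorStore(tenant_id)
        return self._stores[tenant_id]


registry = SiloRegistry()
acme = registry.store_for("acme")
acme.add("Acme leave policy", [1.0, 0.0])
acme.add("Acme expense policy", [0.0, 1.0])
globex = registry.store_for("globex")
globex.add("Globex pricing sheet", [1.0, 0.1])

print(acme.search([0.9, 0.1]))  # ['Acme leave policy']
```

Even when the query vector is closest to Globex’s document, a search against Acme’s store can only ever return Acme’s own content, because the two stores share nothing.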

Why Understanding Matters to You:

Now, why should you care about this AI showdown? As businesses increasingly integrate AI into their operations, your data security and the adaptability of the AI tools at your disposal matter. If you’re relying on a shared tenancy model, it’s vital to scrutinize the assurances provided by your AI service provider. I implore you to question whether your organization’s valuable data is truly shielded from potential breaches, and whether the promised customization aligns with your unique goals. This may be hard to ascertain from your vendors, especially when their marketing and comms tout “Trust” but fall short on delivery.

My Final Thoughts:

In the fast and ever-evolving landscape of AI, understanding the nuances between shared tenancy models and siloed LLMs is not just an academic exercise – it’s a practical necessity. My concerns about the nonchalant approach of many vendors are a call to action. As you navigate the AI terrain, consider the path that aligns best with your organization’s security and customization needs. In this journey of demystifying AI, your informed choices pave the way for a more robust, secure, and tailored AI experience.

If you would like to talk to an AI professional, please feel free to reach out to me (or the team at Sentia AI) so we can discuss your current and potential future state with AI.

matt.small@sentia.email