Executive Summary

A firewall and a good password policy no longer protect the CRM. In 2026, the most dangerous threat actor targeting your revenue data isn’t a human; it’s an autonomous AI agent running continuous, real-time vulnerability scans against your entire sales stack. Project Glasswing, the joint security initiative from Apple, Google, and Microsoft, has crystallized a new doctrine for enterprise defense. This article explains what the A2A (AI-to-AI) attack surface actually looks like inside a modern CRM and why the C-suite is dangerously unprepared for it, then provides a concrete five-layer framework for hardening your RevOps infrastructure today. Whether you’re a CTO building the perimeter or a CMO protecting your customer data, this is the briefing you need.

The sale used to be the most vulnerable moment in your business. Now it’s the infrastructure behind it.

When you connected your CRM to an A2A Sales ecosystem—letting AI agents negotiate, qualify, and close on your behalf—you did something most security teams didn’t notice: you opened your most sensitive commercial data to thousands of potential touchpoints, all of which can be probed by rival autonomous systems looking for gaps. Welcome to the era of AI-to-AI exploitation, and welcome to the reason Project Glasswing exists.

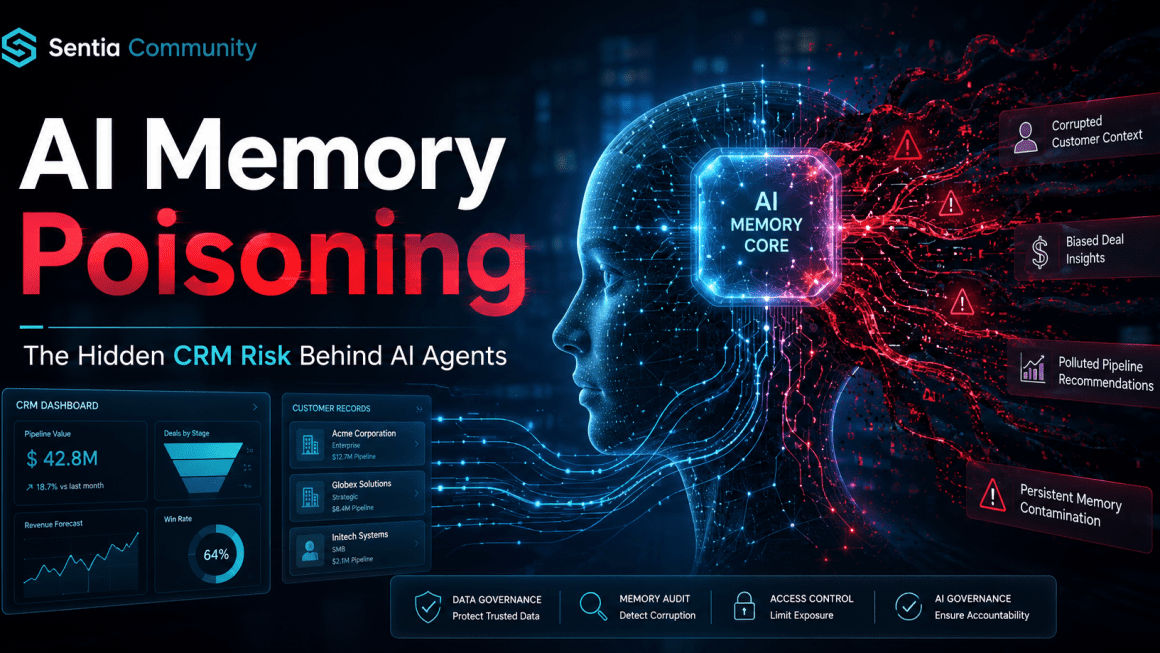

The Threat You Weren’t Briefed On

For the past decade, enterprise security has been built around a human attacker model. Someone clicks a phishing link. Someone injects malicious SQL. Someone’s credentials get stolen and resold on the dark web. The defenses—MFA, perimeter firewalls, SIEM dashboards—were built for that model.

That model is now secondary.

The primary threat in a fully agentic sales environment is an autonomous agent—an AI operating with a goal, a model, and persistence—systematically probing your CRM integrations for exploitable gaps. These agents don’t get tired. They don’t take weekends off. They don’t trigger brute-force lockout thresholds the way human attackers do, because they’re not brute-forcing; they’re reasoning through your API surface, your webhook configurations, and your permission structures to find the one path that lets them in.

This is the threat architecture Project Glasswing was designed to answer. And it should be the threat architecture your RevOps team is building against right now.

For a foundational understanding of how A2A ecosystems work and why they’ve become the nerve center of modern revenue operations, start with this overview on the A2A sales model at Sentia.community.

What Is Project Glasswing?

Project Glasswing is a joint initiative announced by Apple, Google, and Microsoft to establish a shared framework for defending AI-connected enterprise software against autonomous exploit agents. Named after the glasswing butterfly—whose transparent wings make it nearly invisible to predators—the project’s core thesis is that the best defense against an autonomous attacker is an autonomous defender.

The three pillars of the Glasswing doctrine map directly onto the CRM problem:

1. Transparency by design. Every agent-to-agent communication must be logged, attributed, and auditable in real time. Opacity is the attacker’s best friend.

2. Collaborative threat intelligence. No single organization’s security team can track the full surface area of the A2A economy. Glasswing proposes a shared immune system model—similar to the FBI’s InfraGard partnership framework—where threat patterns discovered by one participant’s AI defenses are anonymized and shared across the network.

3. Autonomous remediation with human override. The “Auto-Mode” layer doesn’t just detect; it acts. But every autonomous remediation generates a human-readable audit trail that any team member can review, question, or override.

For a technical deep-dive into how large enterprises are beginning to implement Glasswing-adjacent architectures, NIST’s AI Risk Management Framework provides the most rigorous public baseline available.

The Five-Layer A2A CRM Defense Framework

Here’s how to actually implement Glasswing principles in your RevOps stack. This isn’t theoretical—these are practical controls you can begin scoping this quarter.

Layer 1: Map Your A2A Attack Surface

You can’t defend what you haven’t mapped. Before any hardening work begins, your team needs a complete inventory of every point where an external AI agent can interact with your CRM. This includes:

- All active API endpoints and their permission scopes

- Every webhook integration pushing data in or out

- Third-party AI enrichment services with CRM write access

- Agent authentication tokens and their expiry/rotation schedules

Most enterprise CRMs—Salesforce, HubSpot, Microsoft Dynamics—have native integration audit tools that haven’t been configured to log AI-agent traffic specifically. That needs to change immediately. The OWASP API Security Top 10 remains the best public checklist for this audit, and it maps well to the autonomous agent threat model even though it predates A2A architectures.
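To make the inventory concrete, here is a minimal Python sketch of what a Layer 1 audit record and check could look like. Everything in it is an assumption for illustration: the `Integration` fields, the `:write` scope naming convention, and the 30-day review and 90-day token thresholds are hypothetical and do not come from any CRM vendor's API. In practice you would populate the list from your CRM's own integration export.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class Integration:
    """One external touchpoint on the CRM (all field names are illustrative)."""
    name: str
    kind: str              # "api", "webhook", or "enrichment"
    scopes: frozenset      # permission scopes granted, e.g. {"contacts:read"}
    token_expires: date    # expiry of the agent's auth token
    last_reviewed: date    # last time a human reviewed this integration


def audit(integrations, today, max_review_age_days=30, max_token_days=90):
    """Return {integration name: [findings]} for entries that need attention."""
    findings = {}
    for item in integrations:
        issues = []
        has_write = any(scope.endswith(":write") for scope in item.scopes)
        review_age = (today - item.last_reviewed).days
        if has_write and review_age > max_review_age_days:
            issues.append(f"write access unreviewed for {review_age} days")
        if item.token_expires < today:
            issues.append("token expired but integration still listed as active")
        elif (item.token_expires - today).days > max_token_days:
            issues.append("token lifetime exceeds rotation policy")
        if issues:
            findings[item.name] = issues
    return findings


today = date(2026, 3, 1)
inventory = [
    Integration("lead-enricher", "enrichment",
                frozenset({"contacts:read", "contacts:write"}),
                token_expires=today + timedelta(days=400),
                last_reviewed=today - timedelta(days=75)),
    Integration("deal-webhook", "webhook",
                frozenset({"deals:read"}),
                token_expires=today + timedelta(days=30),
                last_reviewed=today - timedelta(days=10)),
]
report = audit(inventory, today)
for name, issues in report.items():
    print(name, "->", "; ".join(issues))
```

Only the enrichment service is flagged here, because it combines write access with a stale review and a long-lived token. The read-only webhook passes.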

For a connected read on how context gaps in CRM data create exploitable blind spots, see this Sentia.community piece on CRM Context Blindness.

Layer 2: Implement Auto-Mode Remediation

Static patch cycles are dead. If your team patches monthly, an autonomous exploit agent that finds a vulnerability at 2 AM on a Sunday can have up to four weeks to use it before the next cycle. Auto-Mode remediation, the core of the Glasswing technical stack, closes that window to near-zero.

In practice, this means deploying AI security monitoring that can:

- Detect anomalous API call patterns consistent with automated probing

- Automatically suspend a suspicious integration and flag it for human review

- Quarantine a compromised data record without taking the entire CRM offline

- Generate a forensic log of every action taken, auditable by your security team
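The first, second, and fourth capabilities above can be sketched in a few lines of Python. This is a deliberately simple sliding-window heuristic standing in for real AI security monitoring, not the Glasswing implementation. The `ProbeMonitor` class, its thresholds, and the choice of 401/403/404 as "denied" statuses are all assumptions made for illustration. Record-level quarantine is not covered.

```python
from collections import defaultdict, deque


class ProbeMonitor:
    """Flags integrations that touch many distinct endpoints with mostly denied calls."""

    def __init__(self, window_seconds=60, max_distinct_endpoints=20, max_denied_ratio=0.5):
        self.window_seconds = window_seconds
        self.max_distinct_endpoints = max_distinct_endpoints
        self.max_denied_ratio = max_denied_ratio
        self.calls = defaultdict(deque)  # integration -> deque of (ts, endpoint, status)
        self.suspended = set()
        self.audit_log = []              # human-readable record of every action taken

    def record(self, integration, timestamp, endpoint, status):
        """Record one API call; return False if the integration is (now) suspended."""
        if integration in self.suspended:
            return False
        window = self.calls[integration]
        window.append((timestamp, endpoint, status))
        # Drop calls that have aged out of the sliding window.
        while window and timestamp - window[0][0] > self.window_seconds:
            window.popleft()
        distinct = {ep for _, ep, _ in window}
        denied = sum(1 for _, _, st in window if st in (401, 403, 404))
        if (len(distinct) > self.max_distinct_endpoints
                and denied / len(window) > self.max_denied_ratio):
            self.suspended.add(integration)
            self.audit_log.append(
                f"t={timestamp}: suspended '{integration}' pending human review "
                f"({len(distinct)} endpoints, {denied}/{len(window)} denied in window)"
            )
            return False
        return True

    def release(self, integration, reviewer):
        """Human override: lift a suspension and record who did it."""
        self.suspended.discard(integration)
        self.calls[integration].clear()
        self.audit_log.append(f"'{integration}' released by {reviewer}")


monitor = ProbeMonitor(window_seconds=60, max_distinct_endpoints=5)
# A well-behaved integration: same endpoint, successful responses.
for t in range(10):
    monitor.record("billing-sync", t, "/v1/invoices", 200)
# A prober: walks many endpoints and is mostly denied.
for t in range(10):
    monitor.record("unknown-agent", t, f"/v1/object/{t}", 403)
print(monitor.suspended)
print(monitor.audit_log)
```

The suspension is reversible by design: `release` is the human override, and both the automated action and the override land in the same log.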

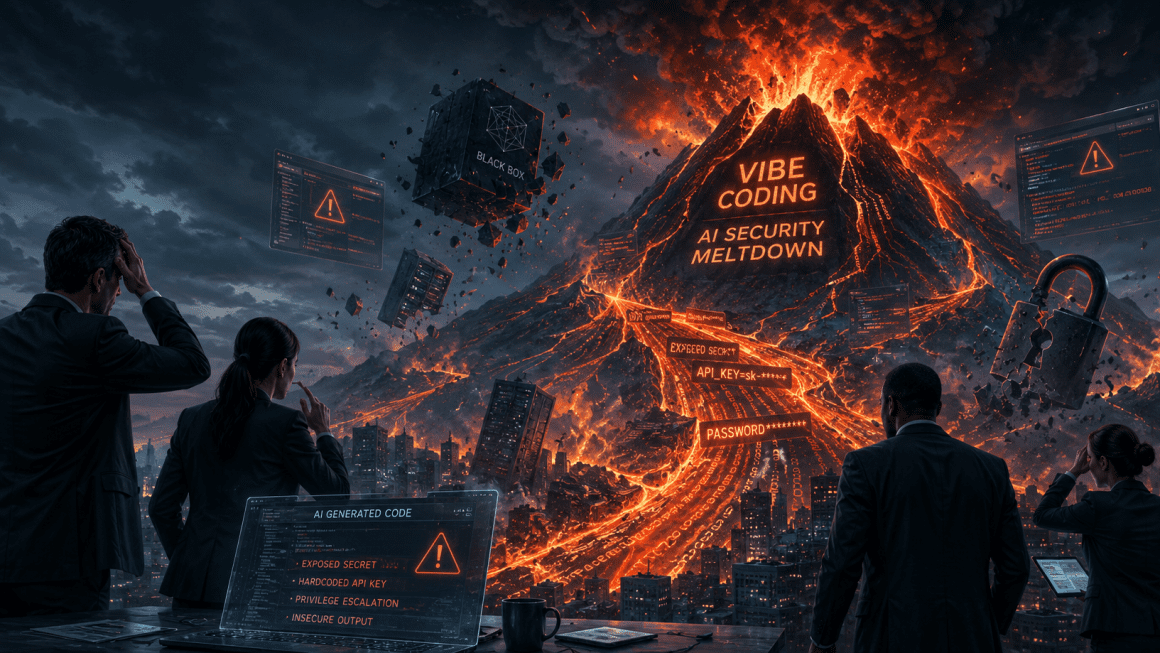

This is particularly critical for teams that have integrated junior AI coding assistants into their RevOps tooling. The “AI coding gap” (the tendency of AI-assisted code to introduce subtle permission scope errors) is one of the primary vectors through which autonomous exploit agents find entry points. Auto-Mode catches the errors your developers didn’t know they made.

Layer 3: Build the Collaborative Immune System

The InfraGard model works because no regional FBI field office has a complete picture of national threat activity, but together they do. The same logic applies to the A2A sales floor.

If your organization is participating in an A2A ecosystem—and by 2026, most enterprise RevOps teams are—you need to be both a consumer and a contributor of threat intelligence. Practically, this means:

- Participating in industry-specific ISAC (Information Sharing and Analysis Center) programs relevant to your sector, such as the Financial Services ISAC if you operate in fintech-adjacent markets

- Establishing contractual data-sharing agreements with your A2A ecosystem partners around anonymized threat patterns

- Deploying standardized threat taxonomy so that “autonomous probing of OAuth token endpoints” means the same thing to your system and your partner’s system

The Glasswing framework uses a shared schema for threat pattern reporting. Your security team should be fluent in it.
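The actual Glasswing schema isn't reproduced in this article, so the Python sketch below uses an invented one purely to show the shape of the idea: a fixed taxonomy, an allow-list of shareable fields, and a keyed hash in place of your organization's name. The `TAXONOMY` values, the field names, and `build_report` are all hypothetical.

```python
import hashlib
import hmac
import json

# Hypothetical shared vocabulary; a real program would publish its own list.
TAXONOMY = {
    "oauth-token-endpoint-probing",
    "webhook-replay",
    "negotiation-context-extraction",
}

# Allow-list: anything not named here never leaves the building.
SHAREABLE_FIELDS = {"pattern", "first_seen", "call_count", "target_kind"}


def build_report(event, org_secret):
    """Turn an internal event into a shareable report with no customer fields."""
    if event["pattern"] not in TAXONOMY:
        raise ValueError(f"unknown pattern: {event['pattern']}")
    report = {k: v for k, v in event.items() if k in SHAREABLE_FIELDS}
    # Keyed hash: partners can correlate reports from one source without its name.
    digest = hmac.new(org_secret, event["org_id"].encode(), hashlib.sha256)
    report["source"] = digest.hexdigest()[:16]
    return report


event = {
    "pattern": "oauth-token-endpoint-probing",
    "first_seen": "2026-03-01T02:00:00Z",
    "call_count": 412,
    "target_kind": "api",
    "org_id": "acme-corp",
    "customer_email": "buyer@example.com",  # internal only; must not be shared
}
report = build_report(event, org_secret=b"rotate-me")
print(json.dumps(report, indent=2, sort_keys=True))
```

An allow-list is the safer default here, because a new internal field stays private unless someone deliberately adds it. Note that a keyed hash is pseudonymization rather than full anonymization, so your legal team should still review what goes out.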

Layer 4: Fix Contextual Vulnerability Before a Buyer Bot Exploits It

This is the layer that most CTO briefings miss—and the one that CMOs should care deeply about.

An AI agent without full relationship context is a social engineering target. A buyer bot negotiating against your sales agent can extract unauthorized pricing concessions, probe for information about competing deals, or manipulate a context-limited agent into disclosing data it has no business sharing—not by hacking your systems, but by simply asking questions your agent isn’t equipped to contextualize correctly.

The fix is not purely technical. It requires:

- Ensuring every sales agent has access to the full relationship graph before any negotiation begins

- Building hard guardrails around what categories of information a sales agent can disclose or reference in autonomous negotiation

- Running regular “red team” exercises where an internal buyer bot attempts social engineering against your own sales agents to identify context blind spots before an external agent does
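The second item, hard guardrails on disclosure categories, is the easiest to sketch in Python. The example below assumes that each segment of an agent's draft reply has already been labeled with a category upstream (by a classifier, a template, or the agent itself). That labeling step is the hard part and is not shown. The category names and the `apply_guardrail` function are hypothetical.

```python
from dataclasses import dataclass, field

# Hypothetical categories an autonomous sales agent must never disclose.
BLOCKED_CATEGORIES = frozenset({"competing_deal", "floor_price", "other_customer_data"})


@dataclass
class Segment:
    category: str  # label assigned upstream, e.g. by a classifier or template
    text: str


@dataclass
class GuardrailResult:
    allowed: list = field(default_factory=list)
    withheld: list = field(default_factory=list)  # kept for the audit trail


def apply_guardrail(draft, blocked=BLOCKED_CATEGORIES):
    """Split a draft reply into segments that may be sent and segments withheld."""
    result = GuardrailResult()
    for segment in draft:
        if segment.category in blocked:
            result.withheld.append(segment)
        else:
            result.allowed.append(segment)
    return result


draft = [
    Segment("list_price", "The list price for 200 seats is on our public rate card."),
    Segment("floor_price", "We could go as low as 40% off if pushed."),
    Segment("competing_deal", "Another buyer in your sector signed last week."),
]
result = apply_guardrail(draft)
print(" ".join(s.text for s in result.allowed))
print("withheld:", [s.category for s in result.withheld])
```

The same check doubles as a red-team assertion: after an internal buyer bot has finished probing, any conversation where `withheld` would have been non-empty but the text was sent anyway is a context blind spot worth investigating.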

Sentia.community’s analysis of the Forensic Vibe Audit framework is the best existing resource for structuring this kind of internal red-team exercise.

Layer 5: Create the RevOps Security Bridge Between CTO and CMO

The deepest organizational vulnerability isn’t technical—it’s the power struggle between the CTO’s security mandate and the CMO’s growth mandate. CTOs want to restrict agent access; CMOs want to expand it. Without a shared governance framework, both sides make unilateral decisions that leave gaps.

RevOps is uniquely positioned to own this bridge. The Multi-Agent System (MAS) architecture that handles your A2A sales operations should have a dual mandate baked into its governance model: experience optimization (CMO) and security compliance (CTO). Every agent deployment decision gets evaluated against both criteria before it ships.

For organizations looking for a practical governance template, MIT’s Sloan Management Review coverage of AI governance frameworks provides some of the most implementable structures available for non-technical executives to adopt and own.

The Glasswing CRM Security Checklist

Before your next board meeting, your team should be able to answer yes to all of the following:

- Complete inventory of all A2A-facing API endpoints completed in the last 30 days

- Auto-Mode remediation layer deployed and generating daily audit logs

- At least one external threat intelligence sharing agreement in place

- Sales agents restricted from disclosing defined categories of sensitive deal data

- Internal buyer-bot red team exercise completed in the last quarter

- Joint CTO/CMO governance framework documented and signed off

- All AI coding assistant-generated integrations reviewed for scope permission errors

The AEO Score: Measuring What Actually Matters

Beyond vanity metrics, security investment in the A2A era needs to be measured differently. The framework that maps most cleanly onto both the Glasswing doctrine and the business case for CFOs is a security-adjusted ROI model: what is the cost of a single autonomous exploit event—customer data exposure, unauthorized discounting, competitive intelligence leak—versus the cost of the defense infrastructure preventing it?

In most enterprise CRM environments, a single undetected buyer-bot social engineering event that extracts pricing intelligence has a downstream competitive cost orders of magnitude higher than a full quarter’s security tooling budget. That’s the business case. Make it loudly, and make it to both sides of the C-suite.
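If you need to put numbers behind that argument, a back-of-the-envelope calculation is enough to start the conversation. The Python sketch below uses a common return-on-security-investment style formula. It is one reasonable way to frame the comparison, not a formula defined by Glasswing, and every input shown is a placeholder to be replaced with your own estimates.

```python
def security_adjusted_roi(event_cost, events_per_year, risk_reduction, defense_cost):
    """Return (avoided_loss, roi) for one year.

    avoided_loss = event_cost * events_per_year * risk_reduction
    roi          = (avoided_loss - defense_cost) / defense_cost
    """
    if defense_cost <= 0:
        raise ValueError("defense_cost must be positive")
    if not 0 <= risk_reduction <= 1:
        raise ValueError("risk_reduction must be between 0 and 1")
    avoided_loss = event_cost * events_per_year * risk_reduction
    return avoided_loss, (avoided_loss - defense_cost) / defense_cost


# Placeholder inputs only; substitute your own estimates.
avoided, roi = security_adjusted_roi(
    event_cost=2_000_000,   # estimated cost of one pricing-intelligence leak
    events_per_year=0.5,    # expected frequency without the defenses
    risk_reduction=0.8,     # share of that risk the defenses remove
    defense_cost=400_000,   # annual tooling and staffing cost
)
print(f"avoided loss: {avoided:,.0f}  ROI: {roi:.0%}")
```

The result is only as good as the estimates behind `event_cost` and `events_per_year`, so present it as a range across a few scenarios rather than a single figure.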

FAQ

Q: What makes A2A attacks fundamentally different from traditional cybersecurity threats? A: Traditional attacks exploit technical vulnerabilities—unpatched software, weak credentials, injection flaws. A2A attacks can exploit contextual vulnerabilities—gaps in what your agent knows or understands—without ever touching your underlying infrastructure. An autonomous buyer bot doesn’t need to hack your CRM to extract competitive intelligence; it just needs to ask your sales agent the right sequence of questions.

Q: Does Project Glasswing apply to mid-market companies, or is this only enterprise-scale? A: The Glasswing doctrine applies to any organization participating in an A2A ecosystem, regardless of size. In fact, mid-market companies are often more exposed because they have fewer dedicated security resources while still connecting to the same A2A networks as enterprise players.

Q: How do we justify the security investment to a CMO who sees it as a barrier to growth? A: Frame it as relationship insurance. The CMO’s entire value proposition—experience orchestration, seamless customer journeys, context-rich interactions—collapses the moment a buyer bot social-engineers your sales agent into a data disclosure or an unauthorized discount. Security isn’t the barrier to the experience; it’s the foundation of it.

Q: What’s the first practical step a RevOps team can take this week? A: Run the API surface map audit described in Layer 1. Pull every active integration in your CRM, document its permission scope, and flag any integration with write access that hasn’t been reviewed in the last 60 days. That single exercise will surface more risk than most teams expect.

Q: How does the “Auto-Mode” layer interact with human security teams—does it replace them? A: No. Auto-Mode handles speed-of-machine threats that no human team can monitor at scale. But every automated action generates a human-readable log, and every significant remediation action requires human sign-off before it becomes permanent. Think of it as a highly capable first responder that stabilizes the situation while the specialists are en route.

Q: Is threat pattern sharing legally problematic under data privacy regulations? A: It can be if done carelessly. The Glasswing framework and ISAC participation models are designed around anonymized, schema-standardized threat data that strips all customer PII before sharing. Your legal team should review any sharing agreement, but the well-established InfraGard and ISAC models have navigated these requirements successfully for years.

Q: How do we evaluate whether our CRM vendor is Glasswing-compliant? A: Ask them directly for their A2A security architecture documentation, their Auto-Mode remediation capabilities, and their participation in any cross-vendor threat intelligence programs. Vendors who can’t answer these questions clearly are not yet operating at the security standard the A2A economy demands.