Executive Summary

The “Consent Reckoning” of 2026 represents a critical inflection point for the global Artificial Intelligence industry. As organizations transition from pilot programs to production at scale, they must navigate a landscape where legacy data practices are no longer legally defensible.

- Algorithmic Disgorgement: The Federal Trade Commission (FTC) is increasingly mandating “model deletion” for AI assets built on non-consensual data.

- Regulatory Maturity: The EU AI Act becomes fully applicable in August 2026, imposing strict conformity assessments for high-risk RevOps systems.

- Financial Scrutiny: Forrester predicts that enterprises will defer 25% of planned AI spend as CFO oversight demands clear, non-speculative ROI.

- Architecture Shift: Privacy-First CRM and Data Lineage tools are becoming essential to keep enterprise datasets from being classified as “legally toxic.”

- Agentic Evolution: Agentic AI is replacing static automation, requiring autonomous systems that are both self-correcting and compliant.

- Security Risks: Gartner forecasts that 40% of AI-related data breaches by 2027 will stem from cross-border Generative AI misuse.

The Rise of Legally Toxic AI

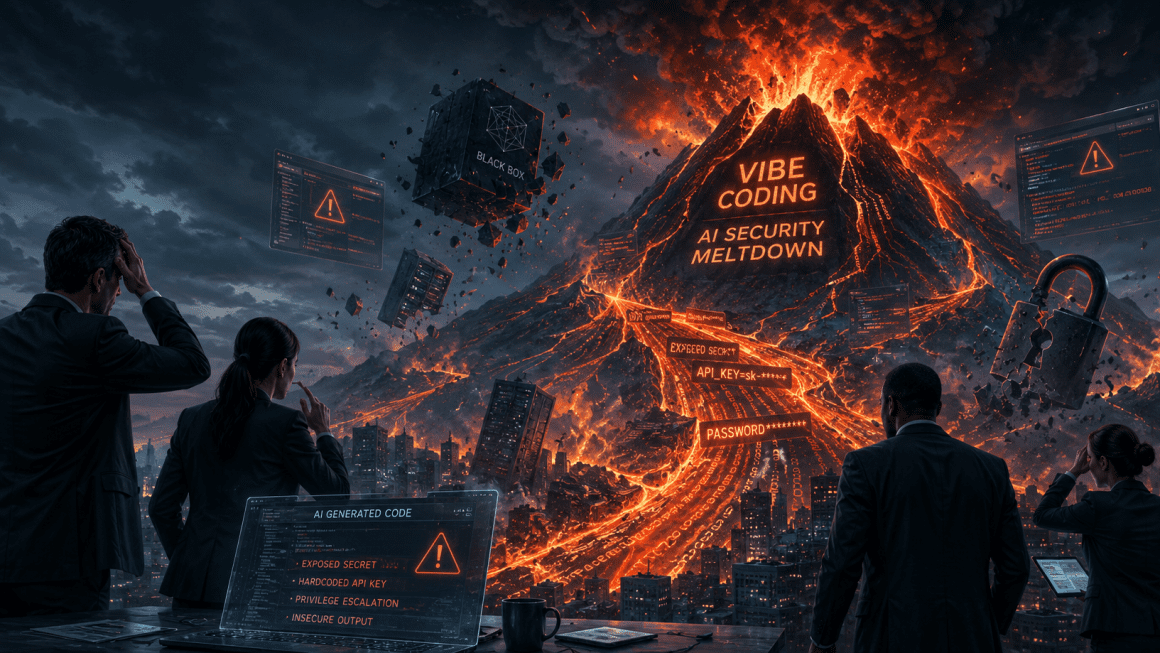

We have entered a “wild west” era of legislation where the biggest threat to any CRM strategy is not the technology but the legal fallout. For years, businesses have fed decades of customer data into Large Language Models (LLMs) without explicit consent for “AI processing.” This oversight has created a reservoir of “legally toxic” models that regulatory bodies now treat as liabilities.

Most organizations assumed that broad “service improvement” clauses in legacy privacy policies covered the development of Neural Networks. However, regulators in 2026 have made clear that training machine learning models is a distinct, high-risk activity requiring specific disclosure. Companies are discovering that their most valuable intellectual property may be one lawsuit away from a total deletion order.

The focus of the C-suite has shifted from data volume to data purity. Leaders must recognize that a model’s long-term value is tethered to its Data Lineage and the ethical provenance of its training sets. Organizations that continue to ignore these compliance boundaries are operating on borrowed time.

Algorithmic Disgorgement: The Nuclear Option

The most significant shift in enforcement is the rise of Algorithmic Disgorgement. This remedy requires companies to delete not only the illegally obtained data but also any weights and parameters derived from that data. The FTC has pioneered this approach as a way to strip firms of the competitive advantage gained through deceptive harvesting.
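
To make the scope of such an order concrete, the sketch below walks a lineage graph to list every artifact derived, directly or indirectly, from one tainted dataset. It is a minimal illustration only: the graph, the artifact names, and the `downstream_artifacts` helper are all hypothetical and do not describe any regulator's or vendor's actual tooling.

```python
from collections import deque

# Hypothetical lineage graph: each key is an artifact, each value lists the
# artifacts derived directly from it. All names are illustrative.
LINEAGE = {
    "photos_2014": ["face_embeddings_v1"],
    "face_embeddings_v1": ["face_model_v1"],
    "face_model_v1": ["face_model_v2_finetuned"],
    "crm_contacts_consented": ["lead_scoring_model"],
}

def downstream_artifacts(graph, tainted_source):
    """Return every artifact derived, directly or indirectly, from tainted_source."""
    seen = set()
    queue = deque([tainted_source])
    while queue:
        node = queue.popleft()
        for child in graph.get(node, []):
            if child not in seen:  # skip artifacts already reached by another path
                seen.add(child)
                queue.append(child)
    return seen

affected = downstream_artifacts(LINEAGE, "photos_2014")
print(sorted(affected))
```

In this toy graph, everything built on `photos_2014` is in scope, while `lead_scoring_model` is untouched because it descends only from the consented dataset.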

A landmark March 2026 settlement involving Match Group and OkCupid highlights the retroactive risk to legacy datasets. The FTC alleged that OkCupid shared nearly 3 million user photos and location tags with an outside firm, Clarifai, to train facial recognition software. Because this data transfer occurred without informing consumers, the resulting models were declared non-compliant.

Retraining a state-of-the-art model to satisfy a disgorgement order is often financially ruinous. While researchers are exploring Machine Unlearning to remove specific data influences, these techniques remain experimental and can degrade accuracy on the data the model is supposed to retain, making its performance unpredictable. This makes proactive compliance the only sustainable strategy for RevOps leaders.

The 2026 Regulatory Landscape

The EU AI Act is now the global benchmark for AI governance, becoming fully applicable in August 2026. It classifies AI systems based on their potential for harm, with “high-risk” applications facing mandatory documentation and human oversight. This includes systems used for recruitment, lead scoring, and automated financial decisions.

In the United States, a complex patchwork of state privacy laws, led by California’s CCPA as amended by the CPRA, continues to evolve. California’s SB 351, effective January 1, 2026, has further fortified the “Corporate Practice of Medicine” doctrine. This law prohibits management platforms from using AI to exercise undue control over clinical decisions or diagnostic protocols.

Organizations must also account for the stand taken by global knowledge commons. Wikipedia has officially banned the use of LLMs for generating or rewriting article content to protect the neutrality of the human-led ecosystem. This move signals a broader trend where “scraped” data is becoming a significant legal and ethical liability.

The Market Correction and CFO Mandate

By early 2026, the era of experimental “AI Hype” has officially ended. Forrester reports that enterprises are deferring 25% of their planned AI spend into 2027 as they recalibrate for ROI. CEOs are now leaning on their CFOs to approve only those projects with measurable financial growth or efficiency gains.

| Paradigm | 2024 Innovation Era | 2026 Financial Rigor |
|---|---|---|
| Approval Body | CTO / Innovation Lab | CFO / Chief Privacy Officer |
| Primary Metric | Token Speed / Accuracy | ROI / Net Revenue Retention |
| Risk Tolerance | “Move Fast, Break Things” | “Sovereign & Compliant” |

This rigor is driving the adoption of “Neoclouds,” specialized cloud providers that focus on high-performance GPUs and sovereign AI. These platforms allow organizations to maintain strict control over where their data is processed and stored.

Agentic AI: Autonomous RevOps Workflows

The focus of 2026 has shifted from conversational chatbots to Agentic AI. Unlike static automation, an Agentic AI system can reason about a goal, plan a sequence of actions, and execute them across multiple systems. According to Gartner, 40% of enterprise applications will embed task-specific agents by the end of 2026.

These agents are particularly transformative for Revenue Operations. An agentic system doesn’t just flag a stalled deal; it analyzes sentiment, identifies missing stakeholders, and triggers a personalized re-engagement sequence. This autonomy allows RevOps teams to focus on relationship strategy while the AI handles high-velocity execution.

However, the autonomy of Agentic AI requires new governance frameworks. Organizations are implementing TRiSM (Trust, Risk, and Security Management) to ensure that agents operate within ethical guardrails. By 2026, enterprises using TRiSM controls are predicted to consume 50% less illegitimate or inaccurate information.
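
One way to picture a guardrail of this kind is a policy check that runs before each action an agent has planned. The sketch below is a simplified assumption, not a TRiSM product or a real agent framework: the `Action` type, the stalled-deal plan, and the rule that personal-data actions need a recorded consent are all hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Action:
    name: str
    uses_personal_data: bool

def plan_for_stalled_deal():
    """Hypothetical plan an agent might propose for a stalled deal."""
    return [
        Action("analyze_email_sentiment", uses_personal_data=True),
        Action("identify_missing_stakeholders", uses_personal_data=False),
        Action("send_reengagement_sequence", uses_personal_data=True),
    ]

def guardrail(action, consents):
    """Allow an action only if it needs no personal data or consent is on record."""
    return (not action.uses_personal_data) or (action.name in consents)

def run_agent(consents):
    """Check every planned action against the guardrail before 'executing' it."""
    executed, blocked = [], []
    for action in plan_for_stalled_deal():
        if guardrail(action, consents):
            executed.append(action.name)  # a real agent would call the CRM here
        else:
            blocked.append(action.name)   # surfaced for human review instead
    return executed, blocked

executed, blocked = run_agent(consents={"send_reengagement_sequence"})
print("executed:", executed)
print("blocked:", blocked)
```

The design point is that the agent never decides its own permissions: the plan and the policy are separate, so blocked steps can be logged and escalated rather than silently skipped.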

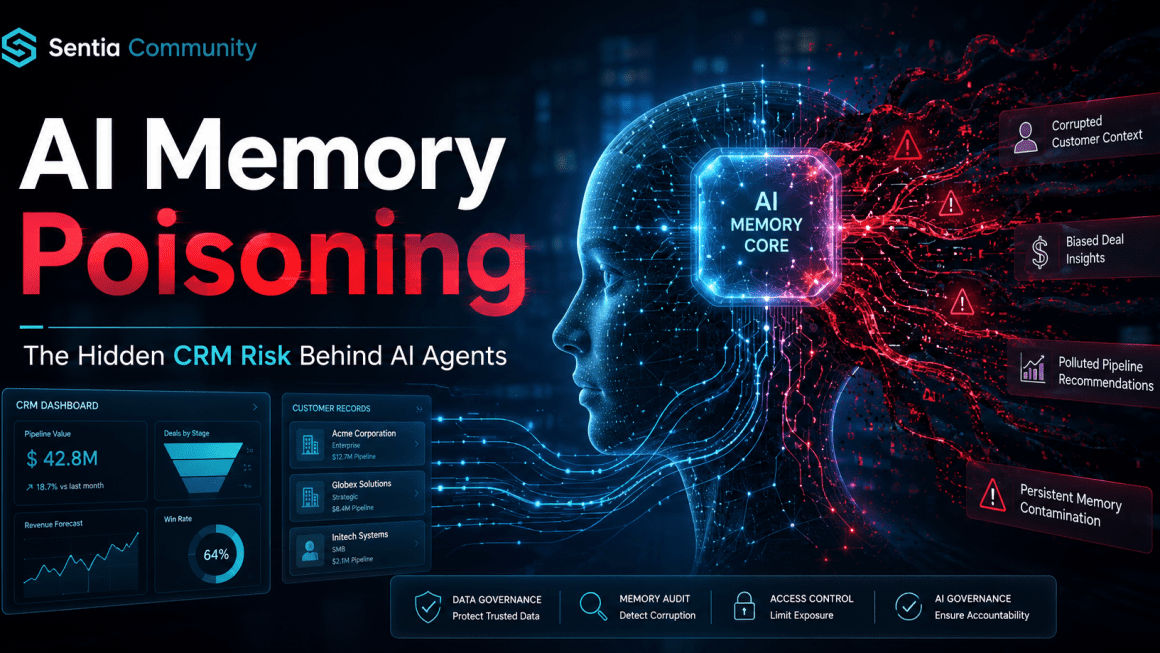

Privacy-First CRM and Data Lineage

To survive the “Consent Reckoning,” the transition to a Privacy-First CRM is mandatory. This architecture prioritizes transparent collection and auditable Data Lineage. It moves away from the “data lake” model toward a system where every customer record is tagged with its specific AI processing permissions.
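
As a rough illustration of per-record permission tagging, the sketch below attaches consented purposes and a consent source to each customer record and filters by purpose before any data reaches a training or inference pipeline. The `Purpose` values, field names, and sample records are assumptions made for this example, not a reference schema.

```python
from dataclasses import dataclass, field
from enum import Enum

class Purpose(Enum):
    INFERENCE = "inference"
    TRAINING = "training"

@dataclass(frozen=True)
class CustomerRecord:
    customer_id: str
    region: str
    # Purposes the customer explicitly agreed to; empty means no AI processing.
    consented_purposes: frozenset = field(default_factory=frozenset)
    # Lineage note: where and when the consent was captured (hypothetical format).
    consent_source: str = ""

def allowed_for(records, purpose):
    """Keep only records whose consent covers the requested purpose."""
    return [r for r in records if purpose in r.consented_purposes]

records = [
    CustomerRecord("c-001", "EU", frozenset({Purpose.INFERENCE, Purpose.TRAINING}), "signup_form_2025-11"),
    CustomerRecord("c-002", "US", frozenset({Purpose.INFERENCE}), "legacy_policy_2019"),
    CustomerRecord("c-003", "EU"),  # no AI consent recorded
]

training_set = allowed_for(records, Purpose.TRAINING)
inference_set = allowed_for(records, Purpose.INFERENCE)
print([r.customer_id for r in training_set])
print([r.customer_id for r in inference_set])
```

Only `c-001` qualifies for training here, while `c-001` and `c-002` qualify for inference; `c-003` is excluded from both.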

Harvard Business Review notes that while 80% of leaders believe high-quality data is essential for AI, only 10% feel their organizations are “completely ready.” A compliant 2026 architecture must include:

- Granular Consent Tagging: Distinguishing between data used for inference and data used for model training.

- Differential Privacy: Adding calibrated statistical noise to query results or training updates so that no individual’s presence in a dataset can be inferred from the output.

- Sovereign Infrastructure: Ensuring that data for specific regions stays within those geographic and legal boundaries.
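
For the differential privacy item above, one common form is the Laplace mechanism applied to a count query. The sketch below is a teaching example under stated assumptions: the deal sizes, the `epsilon` value, and the helper names are made up, and Python's `random` module is used only for readability. A production system would rely on a vetted differential privacy library and a secure noise source.

```python
import random

def laplace_noise(scale, rng):
    # The difference of two i.i.d. exponentials with mean `scale`
    # follows a Laplace(0, scale) distribution.
    return rng.expovariate(1 / scale) - rng.expovariate(1 / scale)

def private_count(values, predicate, epsilon, rng):
    """Noisy count under the Laplace mechanism.

    Adding or removing one person changes a count by at most 1 (sensitivity 1),
    so the noise scale is 1 / epsilon.
    """
    true_count = sum(1 for v in values if predicate(v))
    return true_count + laplace_noise(1.0 / epsilon, rng)

rng = random.Random(0)  # fixed seed only so the sketch is repeatable
deal_sizes = [12_000, 48_000, 5_000, 91_000, 30_000]  # hypothetical data
noisy = private_count(deal_sizes, lambda v: v > 25_000, epsilon=1.0, rng=rng)
print(f"noisy count of large deals: {noisy:.2f}")
```

A smaller `epsilon` means more noise and stronger privacy; the true count here is 3, and individual noisy answers scatter around it.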

Strategy: Building Trustworthy AI

The goal for the next 24 months is the deployment of “Trustworthy AI” that serves as a sentinel for privacy rather than an intruder. Organizations must integrate privacy, cybersecurity, and AI governance into a single, unified enterprise risk structure. Siloing these disciplines is now considered a high-risk operational failure.

Senior leadership should prioritize the following:

- Audit Legacy Models: Identify and mitigate risks in any model trained on data collected before 2024.

- Update Vendor MSAs: Revise contracts to explicitly address AI training rights and the allocation of liability for model disgorgement.

- Pivot to ROI-Centric AI: Stop funding speculative pilots; focus on agentic workflows that drive Net Revenue Retention (NRR).

- Implement Explainability: Use tools like LIME or SHAP to ensure AI decisions are transparent and auditable.
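
To show what the explainability item produces, the sketch below computes exact Shapley values, the quantity SHAP approximates, for a toy lead-scoring function. It does not use the SHAP or LIME libraries; the scoring weights, feature names, and baseline are hypothetical, and the brute-force approach shown is only practical for a handful of features.

```python
from itertools import permutations

def shapley_values(predict, instance, baseline):
    """Exact Shapley values: average each feature's marginal contribution
    over all feature orderings. Features not yet 'revealed' take their
    baseline value. Cost grows factorially with the number of features."""
    names = list(instance)
    totals = {n: 0.0 for n in names}
    orderings = list(permutations(names))
    for order in orderings:
        current = dict(baseline)
        prev = predict(current)
        for name in order:
            current[name] = instance[name]  # reveal this feature's real value
            new = predict(current)
            totals[name] += new - prev
            prev = new
    return {n: t / len(orderings) for n, t in totals.items()}

def lead_score(f):
    """Hypothetical lead-scoring model; the weights are made up."""
    return 0.5 * f["email_opens"] + 2.0 * f["demo_requested"] - 0.1 * f["days_since_contact"]

instance = {"email_opens": 6, "demo_requested": 1, "days_since_contact": 20}
baseline = {"email_opens": 2, "demo_requested": 0, "days_since_contact": 10}

attributions = shapley_values(lead_score, instance, baseline)
for name, value in attributions.items():
    print(f"{name}: {value:+.2f}")
```

Because this toy model is linear, each attribution equals the weight times the feature's distance from the baseline, and the attributions sum to the gap between the instance's score and the baseline score. That additive property is what makes the output usable as an audit record.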

As the era of volatility continues, the organizations that recalibrate their investments under tight legal scrutiny will be the only ones to thrive.

10-Part FAQ

1. What is “Algorithmic Disgorgement” and why is it dangerous?

Algorithmic disgorgement is a legal remedy where a regulator, like the FTC, forces a company to delete an AI model built on improperly obtained data. It is considered the “nuclear option” because it can destroy years of R&D and render your core product or operational system useless.

2. How does the EU AI Act impact U.S. SaaS companies in 2026?

If a U.S. company places an AI system on the EU market, or the system’s output is used in the EU, it must comply with the EU AI Act. This involves categorizing the AI’s risk level and ensuring that “high-risk” systems, such as those used in recruitment or financial scoring, meet strict transparency and security standards.

3. What makes an AI model “legally toxic”?

An AI model is “legally toxic” if it was trained using personal data without explicit, informed consent for that specific use. Legacy “service improvement” clauses are generally insufficient in 2026. If the training data is tainted, the model becomes a regulatory liability subject to deletion.

4. Why is Forrester predicting a 25% deferral in AI spending?

Enterprises are slowing down because they cannot link speculative AI pilots to actual financial growth. CFOs are now requiring strict ROI proof before approving new production deployments, leading to a market correction where “hype-based” spending is replaced by rigorous financial accountability.

5. What is the difference between standard automation and Agentic AI?

Standard automation follows rigid “if/then” rules. Agentic AI is autonomous and goal-oriented; it can reason about a task, plan its own steps, and adapt its behavior based on the outcomes it sees. It functions more like a digital employee than a script.

6. How can a Privacy-First CRM prevent model deletion orders?

A Privacy-First CRM provides clear “data lineage,” allowing you to prove exactly when and how consent was obtained for every piece of data in your model. This audit trail protects your AI assets from being declared “ill-gotten” during regulatory investigations or litigation.

7. Is it still safe to use web-scraped data for AI training?

Scraped data is increasingly risky. Platforms like Wikipedia have banned AI-generated content and prohibited LLMs from rewriting their articles to protect human scholarship. Using scraped data from platforms that have explicitly opted out can lead to “legally toxic” model designations.

8. What are the penalties for violating the EU AI Act?

Penalties for severe non-compliance can reach €35 million or up to 7% of a company’s annual global turnover, whichever is higher. Beyond fines, regulators can also ban the use of the AI system entirely within the European Union, leading to a total loss of market access.
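
The “whichever is higher” rule can be written as simple arithmetic. The sketch below assumes turnover is given in whole euros and uses a hypothetical function name; it illustrates the ceiling for the most severe tier only and is not legal advice.

```python
def max_penalty_eur(global_turnover_eur):
    """Upper bound for the most severe tier: the higher of EUR 35 million
    or 7% of annual global turnover (integer euros, rounded down)."""
    fixed_cap = 35_000_000
    turnover_cap = global_turnover_eur * 7 // 100
    return max(fixed_cap, turnover_cap)

# Hypothetical companies: one mid-sized, one large.
print(max_penalty_eur(200_000_000))    # 7% is 14 million, so the fixed cap applies
print(max_penalty_eur(1_000_000_000))  # 7% is 70 million, so the turnover cap applies
```

The crossover sits at €500 million in turnover: below it the fixed €35 million figure dominates, above it the 7% figure does.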

9. What is “Machine Unlearning” and can it replace retraining?

Machine unlearning is a technical attempt to remove the influence of specific data from a trained model without a full retrain. However, it is still experimental and can degrade accuracy on the data the model is meant to retain, leaving its performance unpredictable and potentially useless for business operations.

10. How should a CMO respond to the “Consent Reckoning”?

CMOs should immediately audit their data pipelines to identify any “toxic” legacy data. They must pivot to “clean,” first-party data strategies and work closely with legal and technical teams to ensure that all future AI initiatives are built on a foundation of explicit consent.

David Brown | CCO & Startup AI Investor
